This article was authored by Marcus Woo and originally published in Nature.

Fork in hand, a robot arm skewers a strawberry from above and delivers it to Tyler Schrenk’s mouth. Sitting in his wheelchair, Schrenk nudges his neck forward to take a bite. Next, the arm goes for a slice of banana, then a carrot. Each motion it performs by itself, on Schrenk’s spoken command.

For Schrenk, who became paralysed from the neck down after a diving accident in 2012, such a device would make a huge difference in his daily life if it were in his home. “Getting used to someone else feeding me was one of the strangest things I had to transition to,” he says. “It would definitely help with my well-being and my mental health.”

His home is already fitted with voice-activated power switches and door openers, enabling him to be independent for about 10 hours a day without a caregiver. “I’ve been able to figure most of this out,” he says. “But feeding on my own is not something I can do.” Which is why he wanted to test the feeding robot, dubbed ADA (short for assistive dexterous arm). Cameras located above the fork enable ADA to see what to pick up. But knowing how forcefully to stick a fork into a soft banana or a crunchy carrot, and how tightly to grip the utensil, requires a sense that humans take for granted: “Touch is key,” says Tapomayukh Bhattacharjee, a roboticist at Cornell University in Ithaca, New York, who led the design of ADA while at the University of Washington in Seattle. The robot’s two fingers are equipped with sensors that measure the sideways (or shear) force when holding the fork [1]. The system is just one example of a growing effort to endow robots with a sense of touch.

“The really important things involve manipulation, involve the robot reaching out and changing something about the world,” says Ted Adelson, a computer-vision specialist at the Massachusetts Institute of Technology (MIT) in Cambridge. Only with tactile feedback can a robot adjust its grip to handle objects of different sizes, shapes and textures. With touch, robots can help people with limited mobility, pick up soft objects such as fruit, handle hazardous materials and even assist in surgery. Tactile sensing also has the potential to improve prosthetics, help people to literally stay in touch from afar, and even has a part to play in fulfilling the fantasy of the all-purpose household robot that will take care of the laundry and dishes. “If we want robots in our home to help us out, then we’d want them to be able to use their hands,” Adelson says. “And if you’re using your hands, you really need a sense of touch.”

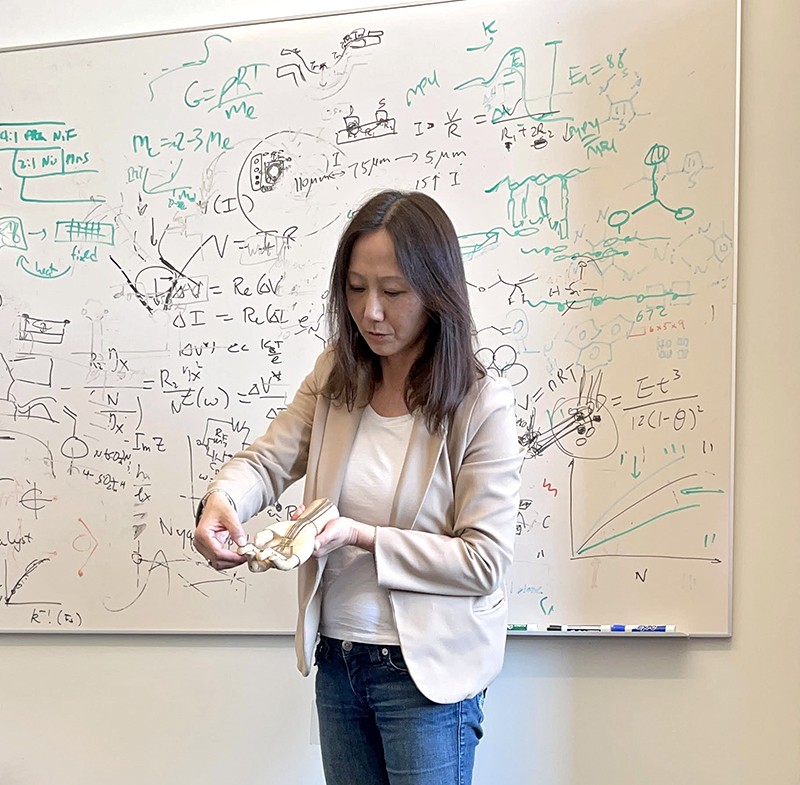

With this goal in mind, and buoyed by advances in machine learning, researchers around the world are developing myriad tactile sensors, from finger-shaped devices to electronic skins. The idea isn’t new, says Veronica Santos, a roboticist at the University of California, Los Angeles. But advances in hardware, computational power and algorithmic knowhow have energized the field. “There is a new sense of excitement about tactile sensing and how to integrate it with robots,” Santos says.

Feel by sight

One of the most promising sensors relies on well-established technology: cameras. Today’s cameras are inexpensive yet powerful, and combined with sophisticated computer vision algorithms, they’ve led to a variety of tactile sensors. Different designs use slightly different techniques, but they all interpret touch by visually capturing how a material deforms on contact.

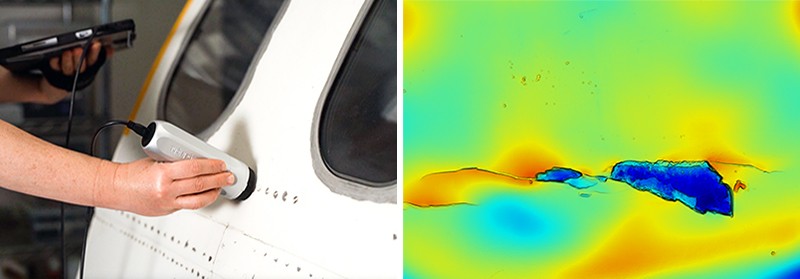

ADA uses a popular camera-based sensor called GelSight, the first prototype of which was designed by Adelson and his team more than a decade ago [2]. A light and a camera sit behind a piece of soft rubbery material, which deforms when something presses against it. The camera then captures the deformation with super-human sensitivity, discerning bumps as small as one micrometre. GelSight can also estimate forces, including shear forces, by tracking the motion of a pattern of dots printed on the rubbery material as it deforms [2].
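The dot-tracking idea can be illustrated with a minimal Python sketch. It is not GelSight's actual algorithm: it assumes the dots have already been located and matched between a resting frame and a pressed frame, and it uses a hypothetical calibration constant (`gain`, in newtons per pixel) to turn the average dot shift into a rough shear estimate.

```python
import math

def mean_displacement(before, after):
    """Average (dx, dy) shift of matched marker dots, in pixels."""
    n = len(before)
    dx = sum(a[0] - b[0] for b, a in zip(before, after)) / n
    dy = sum(a[1] - b[1] for b, a in zip(before, after)) / n
    return dx, dy

def estimate_shear(before, after, gain=0.05):
    """Return (magnitude, direction in degrees) of a rough shear estimate.

    `gain` is a made-up newtons-per-pixel constant for illustration only;
    a real sensor would need its own calibration.
    """
    dx, dy = mean_displacement(before, after)
    magnitude = gain * math.hypot(dx, dy)
    direction = math.degrees(math.atan2(dy, dx))
    return magnitude, direction

# Invented dot positions: every dot slides 3 px right and 4 px down the image.
rest = [(10, 10), (20, 10), (10, 20), (20, 20)]
pressed = [(13, 14), (23, 14), (13, 24), (23, 24)]
magnitude, direction = estimate_shear(rest, pressed)
```

Averaging over all dots is the simplest choice; a fuller treatment would keep the per-dot displacement field, which also carries information about twisting and uneven contact.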

GelSight is not the first or the only camera-based sensor (ADA was tested with another one, called FingerVision). However, its relatively simple and easy-to-manufacture design has so far set it apart, says Roberto Calandra, a research scientist at Meta AI (formerly Facebook AI) in Menlo Park, California, who has collaborated with Adelson. In 2011, Adelson co-founded a company, also called GelSight, based on the technology he had developed. The firm, which is based in Waltham, Massachusetts, has focused its efforts on industries such as aerospace, using the sensor technology to inspect for cracks and defects on surfaces.

One of the latest camera-based sensors is called Insight, documented this year by Huanbo Sun, Katherine Kuchenbecker and Georg Martius at the Max Planck Institute for Intelligent Systems in Stuttgart, Germany [3]. The finger-like device consists of a soft, opaque, tent-like dome held up with thin struts, hiding a camera inside.

It’s not as sensitive as GelSight, but it offers other advantages. GelSight is limited to sensing contact on a small, flat patch, whereas Insight detects touch all around its finger in 3D, Kuchenbecker says. Insight’s silicone surface is also easier to fabricate, and it determines forces more precisely. Kuchenbecker says that Insight’s bumpy interior surface makes forces easier to see, and unlike GelSight’s method of first determining the geometry of the deformed rubber surface and then calculating the forces involved, Insight determines forces directly from how light hits its camera. Kuchenbecker thinks this makes Insight a better option for a robot that needs to grab and manipulate objects; Insight was designed to form the tips of a three-digit robot gripper called TriFinger.

Skin solutions

Camera-based sensors are not perfect. For example, they cannot sense invisible forces, such as the magnitude of tension of a taut rope or wire. A camera’s frame-rate might also not be quick enough to capture fleeting sensations, such as a slipping grip, Santos says. And squeezing a relatively bulky camera-based sensor into a robot finger or hand, which might already be crowded with other sensors or actuators (the components that allow the hand to move), can also pose a challenge.

This is one reason other researchers are designing flat and flexible devices that can wrap around a robot appendage. Zhenan Bao, a chemical engineer at Stanford University in California, is designing skins that incorporate flexible electronics and replicate the body’s ability to sense touch. In 2018, for example, her group created a skin that detects the direction of shear forces by mimicking the bumpy structure of a below-surface layer of human skin called the spinosum [4].

When a gentle touch presses the outer layer of human skin against the dome-like bumps of the spinosum, receptors in the bumps feel the pressure. A firmer touch activates deeper-lying receptors found below the bumps, distinguishing a hard touch from a soft one. And a sideways force is felt as pressure pushing on the side of the bumps.

Bao’s electronic skin similarly features a bumpy structure that senses the intensity and direction of forces. Each one-millimetre bump is covered with 25 capacitors, which store electrical energy and act as individual sensors. When the layers are pressed together, the amount of stored energy changes. Because the sensors are so small, Bao says, a patch of electronic skin can pack in a lot of them, enabling the skin to sense forces accurately and aiding a robot to perform complex manipulations of an object.
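One way to picture how a cluster of capacitors on a bump could report both intensity and direction is the Python sketch below. It is an illustration under stated assumptions, not the published design: the 25 capacitors are imagined as a 5×5 grid, the readings are invented capacitance changes in arbitrary units, the total change stands in for how hard the bump is pressed, and the change-weighted offset from the grid centre stands in for the sideways direction.

```python
import math

def read_bump(delta_c):
    """Summarise one bump from a square grid of capacitance changes.

    Returns (total_change, direction_degrees). The direction is None when
    the changes are balanced around the centre (a straight-down press).
    Columns increase to the right and rows increase downwards.
    """
    total = sum(sum(row) for row in delta_c)
    if total == 0:
        return 0.0, None
    centre = (len(delta_c) - 1) / 2
    # Change-weighted average offset from the grid centre.
    x = sum(v * (j - centre) for row in delta_c for j, v in enumerate(row)) / total
    y = sum(v * (i - centre) for i, row in enumerate(delta_c) for v in row) / total
    if x == 0 and y == 0:
        return total, None
    return total, math.degrees(math.atan2(y, x))

# Invented readings: the right-hand column is squeezed harder than the rest.
sideways_push = [[1, 1, 1, 1, 3] for _ in range(5)]
straight_press = [[1] * 5 for _ in range(5)]

push_total, push_direction = read_bump(sideways_push)
press_total, press_direction = read_bump(straight_press)
```

Here the sideways push yields a direction of 0 degrees (towards the right-hand column), whereas the even press yields no direction at all, which mirrors the distinction between shear and normal force described above.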

To test the skin, the researchers attached a patch to the fingertip of a rubber glove worn by a robot hand. The hand could pat the top of a raspberry and pick up a ping-pong ball without crushing either.

Although other electronic skins might not be as sensor-dense, they tend to be easier to fabricate. In 2020, Benjamin Tee, a former student of Bao who now leads his own laboratory at the National University of Singapore, developed a sponge-like polymer that can sense shear forces [5]. Moreover, similar to human skin, it is self-healing: after being torn or cut, it fuses back together when heated and stays stretchy, which is useful for dealing with wear and tear.

The material, dubbed AiFoam, is embedded with flexible copper wire electrodes, roughly emulating how nerves are distributed in human skin. When touched, the foam deforms and the electrodes squeeze together, which changes the electrical current travelling through it. This allows both the strength and direction of forces to be measured. AiFoam can even sense a person’s presence just before they make contact — when their finger comes within a few centimetres, it lowers the electric field between the foam’s electrodes.

Last November, researchers at Meta AI and Carnegie Mellon University in Pittsburgh, Pennsylvania, announced a touch-sensitive skin comprising a rubbery material embedded with magnetic particles [6]. When the skin, dubbed ReSkin, deforms, the particles move along with it, changing the magnetic field. It is designed to be easily replaced (it can be peeled off and a fresh skin installed without requiring complex recalibration), and 100 sensors can be produced for less than US$6.

Rather than being universal tools, different skins and sensors will probably lend themselves to particular purposes. Bhattacharjee and his colleagues, for example, have created a stretchable sleeve that fits over a robot arm and is useful for sensing incidental contact between a robotic arm and its environment [7]. The sleeve is made from layered fabric that detects changes in electrical resistance when pressure is applied to it. It can’t detect shear forces, but it can cover a broad area and wrap around a robot’s joints.

Bhattacharjee is using the sleeve to identify not just when a robotic arm comes into contact with something as it moves through a cluttered environment, but also what it bumps up against. If a helper robot in a home brushed against a curtain while reaching for an object, it might be fine for it to continue, but contact with a fragile wine glass would require evasive action.

Other approaches use air to provide a sense of touch. Some robots use suction grippers to pick up and move objects in warehouses or in the oceans. In these cases, Hannah Stuart, a mechanical engineer at the University of California, Berkeley, is hoping that measuring suction airflow can provide tactile feedback to a robot. Her group has shown that the rate of airflow can determine the strength of the suction gripper’s hold and even the roughness of the surface it is suckered on to [8]. And underwater, it can reveal how an object moves while being held by a suction-aided robot hand [9].

Processing feelings

Today’s tactile technologies are diverse, Kuchenbecker says. “There are multiple feasible options, and people can build on the work of others,” she says. But designing and building sensors is only the start. Researchers then have to integrate them into a robot, which must then work out how to use a sensor’s information to execute a task. “That’s actually going to be the hardest part,” Adelson says.

For electronic skins that contain a multitude of sensors, processing and analysing data from them all would be computationally and energy intensive. To handle so many data, researchers such as Bao are taking inspiration from the human nervous system, which processes a constant flood of signals with ease. Computer scientists have been trying to mimic the nervous system with neuromorphic computers for more than 30 years. But Bao’s goal is to combine a neuromorphic approach with a flexible skin that could integrate with the body seamlessly — for example, on a bionic arm.

Unlike in other tactile sensors, Bao’s skins deliver sensory signals as electrical pulses, like those in biological nerves. Information is stored not in the intensity of the pulses, which can wane as a signal travels, but instead in their frequency. As a result, the signal won’t lose much information as the range increases, she explains.
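A toy Python model shows why coding information in pulse frequency survives a weakening signal. Everything here is an assumption made for illustration rather than a description of Bao's hardware: pressure is mapped linearly to a pulse rate (`hz_per_unit`), travel along a wire is modelled as a uniform loss of amplitude, and the receiver simply counts pulses that clear a small threshold.

```python
def encode(pressure, duration=1.0, hz_per_unit=10.0):
    """Return pulse timestamps; a higher pressure gives a higher pulse rate."""
    rate = pressure * hz_per_unit
    count = int(rate * duration)
    return [i / rate for i in range(count)]

def attenuate(pulse_times, amplitude=1.0, loss=0.8):
    """Model signal travel: every pulse gets weaker, but its timing is unchanged."""
    return [(t, amplitude * (1 - loss)) for t in pulse_times]

def decode(pulses, duration=1.0, hz_per_unit=10.0, threshold=0.05):
    """Recover the pressure by counting pulses that are still detectable."""
    count = sum(1 for _, amp in pulses if amp > threshold)
    return count / duration / hz_per_unit

sent = 5.0
received = decode(attenuate(encode(sent)))
```

In this sketch `received` equals `sent` even though each pulse has lost 80% of its amplitude. Had the pressure been coded in pulse height instead, the same loss would have corrupted the reading.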

Pulses from multiple sensors would meet at devices called synaptic transistors, which combine the signals into a pattern of pulses — similar to what happens when nerves meet at synaptic junctions. Then, instead of processing signals from every sensor, a machine-learning algorithm needs only to analyse the signals from several synaptic junctions, learning whether those patterns correspond to, say, the fuzz of a sweater or the grip of a ball.

In 2018, Bao’s lab built this capability into a simple, flexible, artificial nerve system that could identify Braille characters [10]. When attached to a cockroach’s leg, the device could stimulate the insect’s nerves, demonstrating the potential for a prosthetic device that could integrate with a living creature’s nervous system.

Ultimately, to make sense of sensor data, a robot must rely on machine learning. Conventionally, processing a sensor’s raw data was tedious and difficult, Calandra says. To understand the raw data and convert them into physically meaningful numbers such as force, roboticists had to calibrate and characterize the sensor. With machine learning, roboticists can skip these laborious steps. The algorithms enable a computer to sift through a huge amount of raw data and identify meaningful patterns by itself. These patterns — which can represent a sufficiently tight grip or a rough texture — can be learnt from training data or from computer simulations of its intended task, and then applied in real-life scenarios.
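To make the idea of learning patterns straight from raw readings concrete, here is a deliberately small Python sketch. It uses a nearest-centroid classifier, chosen only because it fits in a few lines; it is not the method used by any of the groups mentioned, and the labels and numbers are invented training data standing in for uncalibrated sensor output.

```python
def centroid(rows):
    """Element-wise mean of a list of equal-length reading vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]

def train(examples):
    """Learn one average 'pattern' per label from raw readings."""
    return {label: centroid(rows) for label, rows in examples.items()}

def classify(model, reading):
    """Pick the label whose learnt pattern is closest to the new reading."""
    def squared_distance(pattern):
        return sum((r - p) ** 2 for r, p in zip(reading, pattern))
    return min(model, key=lambda label: squared_distance(model[label]))

# Invented raw readings from three sensing elements (arbitrary units).
training_data = {
    "tight_grip": [[0.90, 0.80, 0.85], [0.95, 0.90, 0.80]],
    "loose_grip": [[0.20, 0.10, 0.15], [0.30, 0.20, 0.10]],
}
model = train(training_data)
label = classify(model, [0.88, 0.82, 0.90])
```

No step converts the readings into newtons: the classifier works directly on the raw values, which is the shortcut Calandra describes. Real systems would use far more data and far more capable models.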

“We’ve really just begun to explore artificial intelligence for touch sensing,” Calandra says. “We are nowhere near the maturity of other fields like computer vision or natural language processing.” Computer-vision data are based on a two-dimensional array of pixels, an approach that computer scientists have exploited to develop better algorithms, he says. But researchers still don’t fully know what a comparable structure might be for tactile data. Understanding the structure for those data, and learning how to take advantage of them to create better algorithms, will be one of the biggest challenges of the next decade.

Barrier removal

The boom in machine learning and the variety of emerging hardware bode well for the future of tactile sensing. But the plethora of technologies is also a challenge, researchers say. Because so many labs have their own prototype hardware, software and even data formats, scientists have a difficult time comparing devices and building on one another’s work. And if roboticists want to incorporate touch sensing into their work for the first time, they would have to build their own sensors from scratch, an often expensive task, and not necessarily in their area of expertise.

This is why, last November, GelSight and Meta AI announced a partnership to manufacture a camera-based fingertip-like sensor called DIGIT. With a listed price of $300, the device is designed to be a standard, relatively cheap, off-the-shelf sensor that can be used in any robot. “It definitely helps the robotics community, because the community has been hindered by the high cost of hardware,” Santos says.

Depending on the task, however, you don’t always need such advanced hardware. In a paper published in 2019, a group at MIT led by Subramanian Sundaram built sensors by sandwiching a few layers of material together, which change electrical resistance when under pressure [11]. These sensors were then incorporated into gloves, at a total material cost of just $10. When aided by machine learning, even a tool as simple as this can help roboticists to better understand the nuances of grip, Sundaram says.

Not every roboticist is a machine-learning specialist, either. To aid with this, Meta AI has released open source software for researchers to use. “My hope is by open-sourcing this ecosystem, we’re lowering the entry bar for new researchers who want to approach the problem,” Calandra says. “This is really the beginning.”

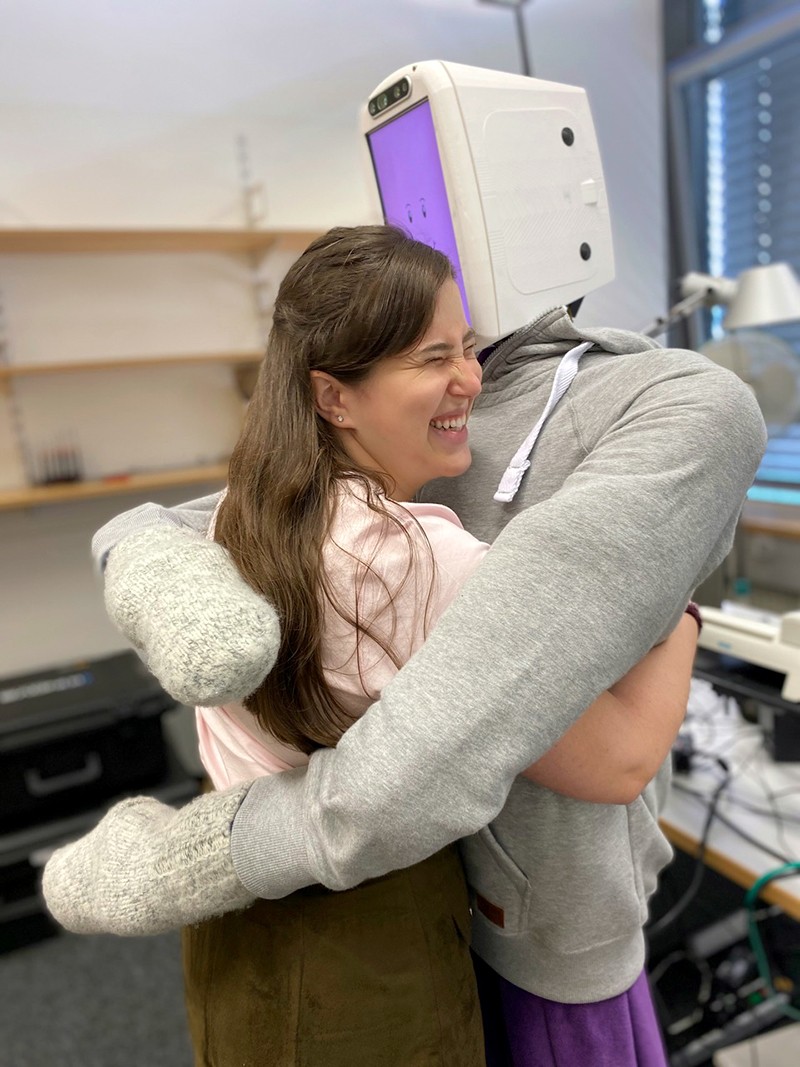

Although grip and dexterity continue to be a focus of robotics, that’s not all tactile sensing is useful for. A soft, slithering robot might need to feel its way around to navigate rubble as part of search and rescue operations, for instance. Or a robot might simply need to feel a pat on the back: Kuchenbecker and her student Alexis Block have built a robot with torque sensors in its arms and a pressure sensor and microphone inside a soft, inflatable body that can give a comfortable and pleasant hug, and then release when you let go. That kind of human-like touch is essential to many robots that will interact with people, including prosthetics, domestic helpers and remote avatars. These are the areas in which tactile sensing might be most important, Santos says. “It’s really going to be the human–robot interaction that’s going to drive it.”

So far, robotic touch is confined mainly to research labs. “There’s a need for it, but the market isn’t quite there,” Santos says. But some of those who have been given a taste of what might be achievable are already impressed. Schrenk’s tests of ADA, the feeding robot, provided a tantalizing glimpse of independence. “It was just really cool,” he says. “It was a look into the future for what might be possible for me.”

doi: https://doi.org/10.1038/d41586-022-01401-y

This article is part of Nature Outlook: Robotics and artificial intelligence, an editorially independent supplement produced with the financial support of third parties.

References

1. Song, H., Bhattacharjee, T. & Srinivasa, S. S. 2019 International Conference on Robotics and Automation 8367–8373 (IEEE, 2019).
2. Yuan, W., Dong, S. & Adelson, E. H. Sensors 17, 2762 (2017).
3. Sun, H., Kuchenbecker, K. J. & Martius, G. Nature Mach. Intell. 4, 135–145 (2022).
4. Boutry, C. M. et al. Sci. Robot. 3, aau6914 (2018).
5. Guo, H. et al. Nature Commun. 11, 5747 (2020).
6. Bhirangi, R., Hellebrekers, T., Majidi, C. & Gupta, A. Preprint at http://arxiv.org/abs/2111.00071 (2021).
7. Wade, J., Bhattacharjee, T., Williams, R. D. & Kemp, C. C. Robot. Auton. Syst. 96, 1–14 (2017).
8. Huh, T. M. et al. 2021 IEEE/RSJ International Conference on Intelligent Robots and Systems 1786–1793 (IEEE, 2021).
9. Nadeau, P., Abbott, M., Melville, D. & Stuart, H. S. 2020 IEEE International Conference on Robotics and Automation 3701–3707 (IEEE, 2020).
10. Kim, Y. et al. Science 360, 998–1003 (2018).
11. Sundaram, S. et al. Nature 569, 698–702 (2019).