This article was authored by Liam Drew and originally published to Nature

Markus Möllmann-Bohle’s left cheek hides a secret that has changed his life. Under the skin, nestled among the nerve fibres that allow him to feel and move his face, is a miniature radio receiver and six tiny electrodes. “I’m a cyborg,” he says, with a chuckle.

This electronic device lies dormant much of the time. But, when Möllmann-Bohle feels pressure starting to gather around his left eye, he retrieves a black plastic wand about the size of a mobile phone, pushes a button and fixes it against his face in a home-made sling. The remote vibrates for a moment, then launches high-frequency radio waves into his cheek.

In response, the implant fires a sequence of electrical pulses into a bundle of nerve cells called the sphenopalatine ganglion. By disrupting these neurons, the device spares 57-year-old Möllmann-Bohle the worst of the agonizing cluster headaches that have plagued him for decades. He uses the implant several times a day. “I need this device to live a good life,” he says.

Cluster headaches are rare, but extraordinarily painful. People are typically affected for life and treatment options are very limited. Möllmann-Bohle experienced his first in 1987 at the age of 22. For decades, he managed sporadic headaches with a mix of painkillers and migraine medication. But in 2006, his condition became chronic, and he would be struck with as many as eight hour-long cluster headaches every day. “I was forced to succumb to the pain again and again,” he says. “I was kept from living my life.”

Möllmann-Bohle, ever more reliant on painkillers and now also taking antidepressants, was hospitalized numerous times. During one of these stays, however, he heard about an electronic implant that some people had started using to control their cluster headaches.

Developed by the start-up Autonomic Technologies (known as ATI) in San Francisco, California, the device had passed a series of placebo-controlled clinical trials with flying colours. “It worked remarkably well,” says Arne May, a neurologist at the University of Hamburg in Germany who led some of those trials on behalf of the start-up. In most people, stimulation reduced the pain of an attack, made attacks less frequent, or both [1]. Side effects were rare. In February 2012, while US trials continued, the European Medicines Agency granted the company approval to market the device across Europe.

Möllmann-Bohle contacted May, and travelled from his home near Düsseldorf, Germany, to meet him. Filled with hope that this might alleviate his suffering, Möllmann-Bohle underwent surgery to have the device fitted in 2013.

The implant was a revelation. After the pattern and strength of the stimulation had been tailored to Möllmann-Bohle’s needs, around an hour’s use five or six times a day was enough to prevent attacks from becoming debilitating. “I was reborn,” he says.

But, by the end of 2019, ATI had collapsed. The company’s closure left Möllmann-Bohle and more than 700 other people alone with a complex implanted medical device. People using the stimulator and their physicians could no longer access the proprietary software needed to recalibrate the device and maintain its effectiveness. Möllmann-Bohle and his fellow users now faced the prospect of the battery in the hand-held remote wearing out, robbing them of the relief that they had found. “I was left standing in the rain,” Möllmann-Bohle says.

A systemic problem

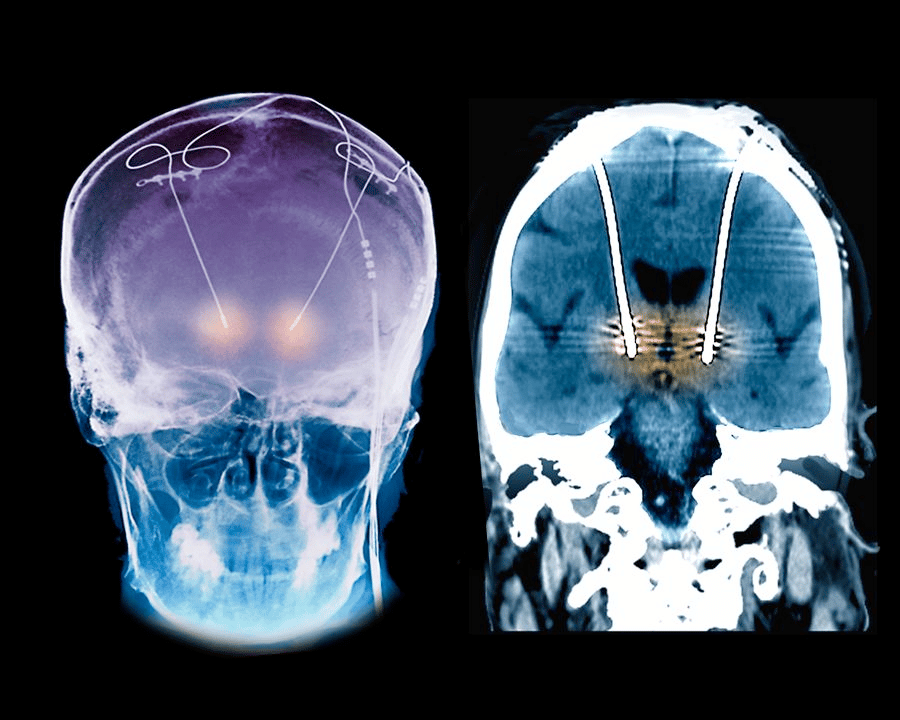

Hundreds of thousands of people benefit from implanted neurotechnology every day. Among the most common devices are spinal-cord stimulators, first commercialized in 1968, that help to ease chronic pain. Cochlear implants that provide a sense of hearing, and deep-brain stimulation (DBS) systems that quell the debilitating tremor of Parkinson’s disease, are also established therapies.

Encouraged by these successes, and buoyed by advances in computing and engineering, researchers are trying to develop ever more sophisticated devices for numerous other neurological and psychiatric conditions. Rather than simply stimulating the brain, spinal cord or peripheral nerves, some devices now monitor and respond to neural activity.

For example, in 2013, the US Food and Drug Administration approved a closed-loop system for people with epilepsy. The device detects signs of neural activity that could indicate a seizure and stimulates the brain to suppress it. Some researchers are aiming to treat depression by creating analogous devices that can track signals related to mood. And systems that allow people who have quadriplegia to control computers and prosthetic limbs using only their thoughts are also in development and attracting substantial funding.

The market for neurotechnology is predicted to expand by around 75% by 2026, to US$17.1 billion. But as commercial investment grows, so too do the instances of neurotechnology companies giving up on products or going out of business, abandoning the people who have come to depend on their device.

Shortly after the demise of ATI, a company called Nuvectra, which was based in Plano, Texas, filed for bankruptcy in 2019. Its device — a new kind of spinal-cord stimulator for chronic pain — had been implanted in at least 3,000 people. In 2020, artificial-vision company Second Sight, in Sylmar, California, laid off most of its workforce, ending support for the 350 or so people who were using its much heralded retinal implant to see. And in June, another manufacturer of spinal-cord stimulators — Stimwave in Pompano Beach, Florida — filed for bankruptcy. The firm has been bought by a credit-management company and is now embroiled in a legal battle with its former chief executive. Thousands of people with the stimulator, and their physicians, are watching on in the hope that the company will continue to operate.

When the makers of implanted devices go under, the implants themselves are typically left in place — surgery to remove them is often too expensive or risky, or simply deemed unnecessary. But without ongoing technical support from the manufacturer, it is only a matter of time before the programming needs to be adjusted or a snagged wire or depleted battery renders the implant unusable.

People are then left searching for another way to manage their condition, but with the added difficulty of a non-functional implant that can be an obstacle both to medical imaging and future implants. For some people, including Möllmann-Bohle, no clear alternative exists.

“It’s a systemic problem,” says Jennifer French, executive director of Neurotech Network, a patient advocacy and support organization in St. Petersburg, Florida. “It goes all the way back to clinical trials, and I don’t think it’s received enough attention.”

As money pours into the neurotechnology sector, implant recipients, physicians, biomedical engineers and medical ethicists are all calling for action to protect people with neural implants. “Unfortunately, with that kind of investment, come failures,” says Gabriel Lázaro-Muñoz, an ethicist specializing in neurotechnology at Harvard Medical School in Boston, Massachusetts. “We need to figure out a way to minimize the harms that patients will endure because of these failures.”

Left to their own devices

When Möllmann-Bohle had the ATI-made neurostimulator implanted to help with his cluster headaches, he agreed to participate in a five-year post-approval trial aimed at refining the device. He diligently provided ATI with data from his device and answered questionnaires about his progress. Every few months he made an 800-kilometre round trip to Hamburg to be assessed.

But four years in, the company running the trial on behalf of ATI called Möllmann-Bohle to tell him it was over. Rumours spread that the firm was in trouble, before a letter from May confirmed his fears — ATI had gone out of business.

Timothy White, another recipient of the company’s stimulator who took part in the post-approval trial, also heard of ATI’s closure second-hand.

Now head of clinical affairs for a medical-device company based near Frankfurt, White credits the device with allowing him to complete his medical training. Indeed, ATI had seized on this eloquent medical student’s enthusiasm for its technology and asked him to speak at conferences and to investors.

Yet even White heard about the company’s collapse only when he contacted May with concerns that his remote control might be under-performing.

“That was really rough for me,” says White. “I was asking myself, what’s going to happen if I lose my remote control, if it breaks down, or the battery dies. But no one really had answers.”

When an implant manufacturer disappears, what happens to the people using its devices varies hugely.

In some cases, there will be alternatives available. When Nuvectra folded, for example, users of its spinal-cord stimulator who feared a resurgence of their chronic pain could turn to similar devices offered by more established companies.

Even this best-case scenario puts considerable strain on the people using the implants, many of whom are already vulnerable, says anaesthesiologist Anjum Bux. He estimates that around 70 people received the Nuvectra device at his pain-management clinics in Kentucky.

Replacing obsolete implants of this kind requires surgery that would otherwise have been unnecessary and takes weeks to recover from. And at around US$40,000 for the surgery and replacement device, it’s also costly — although Bux says that in his experience, insurance providers have picked up the tab.

A greater challenge arises when no ready replacement is available. The stimulator made by ATI that Möllmann-Bohle and White have was the first of its kind. When the manufacturer closed its doors, there was no other implant on the market that they could use to manage their cluster headaches.

Left to fend for themselves, White and Möllmann-Bohle each leant on their own professional expertise. White drew on his medical training and found a drug, developed for treating migraines, that suppresses his headaches. But he must take triple the recommended dose, and worries about potential long-term side effects.

Möllmann-Bohle, meanwhile, turned to skills he developed as an electrical engineer. In the past three years, he has repaired a faulty charging port on the hand-held portion of his device and replaced its inbuilt battery several times. This battery was never intended to be accessible to the user, and it turned out to be unusual. Möllmann-Bohle scoured the Internet and eventually found suitable replacements made by a firm in the United States. When he returned for more, however, he learnt that the company had stopped making them. His most recent replacement came from a Chinese company that custom made what he needed.

His tinkering brought him into conflict with his insurers, who initially advised him not to tamper with the device, but eventually agreed to foot the bill for the replacement parts, after he convinced them he was suitably qualified. “They put really big obstacles in my way, or at least they tried to,” Möllmann-Bohle says. But although his repairs have been successful so far, he knows that he does not have the tools or skills to fix everything that could go wrong.

Although maintaining the device has been tough, Möllmann-Bohle cannot see an alternative. “There is still no medication reliable enough to help me live a pain-free life without the device,” he says.

He and White are now placing much of their hope in the potential revival of ATI’s stimulator technology. In late 2020, a company now called Realeve, based in Effingham, Illinois, announced that it had acquired the patents for the device. The new company intends to market an essentially identical successor device in both the United States and Europe. In April 2021, Realeve attained FDA breakthrough status, which is intended to speed up access to medical devices in the United States.

Möllmann-Bohle and White both approached Realeve earlier this year, and corresponded with then-chief executive Jon Snyder directly, asking for assistance with their implants. So far, they have received none. In an e-mail to Nature in July, Snyder said: “Since we do not have FDA or CE mark approval yet, we are unable to market the therapy and provide support. However, we have investigated the options of providing support via compassionate use approvals in various markets.”

Möllmann-Bohle desperately wants this support to materialize. “He [Snyder] assured me that he and his staff are working on providing replacement parts,” he says. There have been changes at Realeve in recent months, with Snyder departing and a consulting firm taking temporary control of the business. But interim chief executive Peter Donato says that the company has now gained approval in Denmark to distribute replacement devices and software to existing users. He hopes that it can begin deliveries in the latter half of 2023, and says that it is also in talks with three other European countries. For Möllmann-Bohle and others in Germany, the wait goes on. “This new start has been in the making for years now,” he says.

“This new start has been in the making for years now. I’m hopeful, but I’m also a realist.”

A commitment to care

Examples of makers supporting implanted neurotechnology when profits fail to materialize are few and far between. French can therefore consider herself one of the lucky ones.

As well as being a prominent advocate for neurotechnology, she has been using an implanted device to help her move for more than 20 years — even though the life-changing technology never became the foundation of a viable business.

In 1999, two years after a snowboarding accident left her unable to move her legs, French enrolled in a clinical trial of an electrical implant system designed by Ronald Triolo, a biomedical engineer at Case Western Reserve University in Cleveland, Ohio.

Over seven and a half hours, surgeons placed 16 electrodes in her body, each of which could stimulate a nerve that runs to her leg muscles. These electrodes were connected to an implanted pulse generator, which is wirelessly powered and controlled by an external unit.

Initially, the implant allowed French to stand and move herself between her wheelchair and a bed or a car. Over time, more electrodes and controllers have been added. Now she can stand and step, and pedal a stationary bike. “I use it on a daily basis for exercise, for standing, for function,” she says.

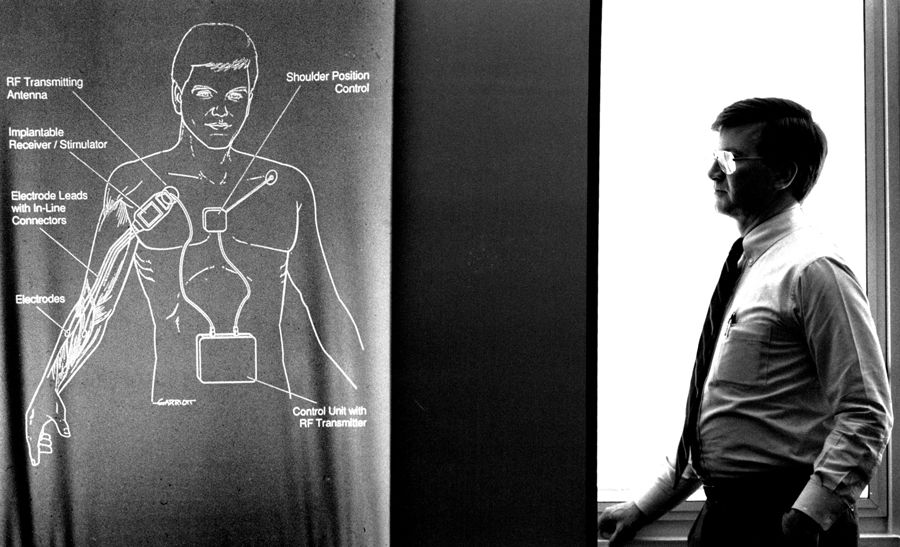

Although the device was not commercially available at the time French joined the trial, Triolo expected it wouldn’t be long before it was — a similar system developed at Case Western for restoring functional hand and arm movement, known as Freehand, had been brought to market by a local start-up in 1997.

But this did not come to pass. Despite the difference it has made to French’s life, the device she uses has never been commercialized. The company that had acquired the rights to the Freehand system shuttered in 2001, and no other company picked up the device. Freehand’s developer, biomedical engineer Hunter Peckham, also at Case Western, attributes the start-up’s failure to impatient investors. “The uptake was not as fast as they would have liked,” he says.

Around 350 people with Freehand devices, as well as French and her fellow participants in Triolo’s lower-body implant trial, could have lost access to the technology that had become an integral part of their lives. But Peckham and Triolo refused to let this happen.

“We understood that if there was something that they were benefiting from, if you took that away that would be another loss for them — when they had had such a devastating loss before,” Peckham says.

Using old and dwindling stocks of components — including items that the university had acquired after the demise of the Freehand manufacturer — and tapping into money from academic grants, the researchers continue to support as many people with these devices as they can.

Over two decades, the Freehand devices have been repaired as they gradually failed, and funding for a succession of further fixed-term clinical trials has allowed Triolo to continue to support French and her fellow research participants. He has even been able to offer them upgrades over time. French’s system has failed four times, leaving her unable to stand and acutely aware of her reliance on the technology. Every time, the Case Western team has provided the surgery and parts required to restore her movement.

French knows her situation is precarious and that it rests on Triolo continuing to attract funding. “I live every day with the fact that this technology might go away,” she says. But she takes heart in what she sees as the researchers’ unwavering commitment to her.

“Our world view,” Triolo says, “is someone is dedicating their body to help advance our research, and we have an obligation to them to maintain their systems for as long as they want to use them.”

Protection from failure

Konstantin Slavin is a neurosurgeon at the University of Illinois College of Medicine in Chicago, who contributed to clinical trials of ATI’s cluster-headache device and implanted the spinal-cord stimulator made by Nuvectra. He thinks that anyone given an implanted device as a part of routine clinical care should be able to count on ongoing support. “You expect them to receive essentially lifelong care from the device manufacturer,” he says.

He is not alone in this view — every device user, physician and engineer Nature interviewed thinks that people need to be better protected from the failure of device makers.

One proposal is that neurotechnology companies should ensure that there is money available to support the people using their devices in the event of the company’s closure. How this would best be achieved is uncertain. Suggestions include the company setting up a partner non-profit organization to manage funds to cover this eventuality; putting aside money in an escrow account; being obliged to take out an insurance policy that would support users; paying into a government-supported safety network; or ensuring the people using the devices are high-priority creditors during bankruptcy proceedings.

Currently, there is little sign that device makers are taking this kind of action. Asked in July if Realeve had plans in place to protect people should its business go the same way as ATI, Snyder, then chief executive, replied: “There is always the risk that a company may stop operating, but our focus is to be successful in our effort to deliver the Realeve Pulsante therapy to patients.”

Realeve’s interim chief executive Donato thinks that it will take legislation to convince investors or shareholders in companies to take on the expense of a safety net. “Unless, and until, the governments force it on us,” he says, “I’m not sure companies will do it on their own.” But Triolo is optimistic that manufacturers might think differently if the jeopardy faced by device users becomes more widely known, and physicians and prospective patients start to favour companies that do have a safety net in place. “If that is what it takes to have a competitive advantage, maybe that’ll be enlightening for our friends on the commercial side of things,” Triolo says.

Indeed, the failures of various neurotechnology start-ups over the past few years are already causing the surgeons responsible for implanting the devices to be cautious.

Robert Levy, a neurosurgeon in Boca Raton, Florida, and a former president of the International Neuromodulation Society, was particularly burnt by the demise of Nuvectra. He had been sufficiently impressed with its technology to become chairman of the company’s medical advisory board in August 2016. But in 2019, around five months before Nuvectra filed for bankruptcy, he cut ties after what he and others formerly associated with the firm saw as the company side-lining the needs of people using the implant in its attempt to stay afloat. “All of us who had any association with the company at that time expressed our severe dissatisfaction with such a move, which we felt was unethical,” Levy says.

From now on, Levy requires any new company that asks him to implant its product to send him a letter guaranteeing support for the people who have the surgery should something happen to the business. “If they should not supply such a letter, they’re not going to be included in my practice,” he says.

He plans to write an editorial arguing for this approach in the journal Neuromodulation, of which he is editor-in-chief, to further raise awareness and put pressure on neurotechnology companies. “Patients are suffering terribly,” he says. “Making them the victims of bad business practices or a bankruptcy is horrible for patients, horrible for the field and grossly unethical.”

Momentum is also building behind another way to protect people with implants: technical standardization. The electrodes, connectors, programmable circuits and power supplies used in implanted neurotechnology are often proprietary or otherwise difficult to source, as Möllmann-Bohle discovered when looking for replacement parts for his stimulator. If components were common across devices, one manufacturer might be able to step in and offer spares when another goes under.

A 2021 survey of surgeons who implant neurostimulators showed that 86% backed standardization of the connectors used by these devices [2]. Such a move would not be without precedent, says retired neurosurgeon and medical-device engineer Richard North, formerly at Johns Hopkins Medical School in Baltimore, and president of the Institute of Neuromodulation in Chicago, who led the survey. Cardiac pacemakers have included standardized elements since the early 1990s, when manufacturers voluntarily agreed to ensure that any company’s power supply could fuel a pacemaker from any other company. Many of those same companies are now the biggest names in spinal-cord stimulators and DBS systems.

North now co-chairs a Connector Standards Committee for the North American Neuromodulation Society, of which the Institute of Neuromodulation is a part, that is promoting the idea. Although the industry has not raced to embrace further standardization, he thinks it is only a matter of time. “It’s inevitable that there will be standardization, and I think the companies involved recognize that too,” he says. As well as making replacement components easier to come by, North thinks that standardization would boost innovation by encouraging companies to develop components that can be used with a wide range of existing systems.

Peckham hopes that the neurotechnology field can go even further — he wants devices to be made open source. Under the auspices of the Institute for Functional Restoration, a non-profit organization that he and his colleagues at Case Western established in 2013, Peckham plans to make the design specifications and supporting documentation of new implantable technologies developed by his team freely available. “Then people can just cut and paste,” he says.

This marks a major departure from the proprietary nature of most current devices. Peckham hopes that other people will build on the technology, and potentially even adapt it for new indications. The benefits for the people using these devices are at the centre of his thinking. “It starts with a commitment to the patients, to the people who can benefit from this,” he says.

It is exactly that sort of commitment that people such as Möllmann-Bohle, White and French want to see, and to which they think they are entitled. A raft of new companies are developing ever more sophisticated neurological implants with the power to transform people’s lives. Should any fail, it is the people using the devices, and their physicians, who will be most affected, says Triolo.

The recent run of commercial casualties demonstrates the human cost of abandoning neurotechnology. “It’s impossible,” Triolo says, “for people not to know that this is becoming a bigger and bigger issue.”

References

1. J. Schoenen et al. Cephalalgia 33, 816–830 (2013).

2. R. B. North et al. Neuromodulation 24, 1299–1306 (2021).

Author: Liam Drew

Design: Chris Ryan

Video: Josh Birt, Colin Kelly, Adam Levy

Original photography: Nyani Quarmyne

Audio: Adam Levy

Multimedia editors: Adam Levy, Dan Fox

Photo editors: Jessica Hallett, Madeline Hutchinson

Translation: Shaya Zarrin

Subeditor: Jenny McCarthy

Project manager: Rebecca Jones

Editor: Richard Hodson

This article was authored by Neil Savage and originally published to Nature

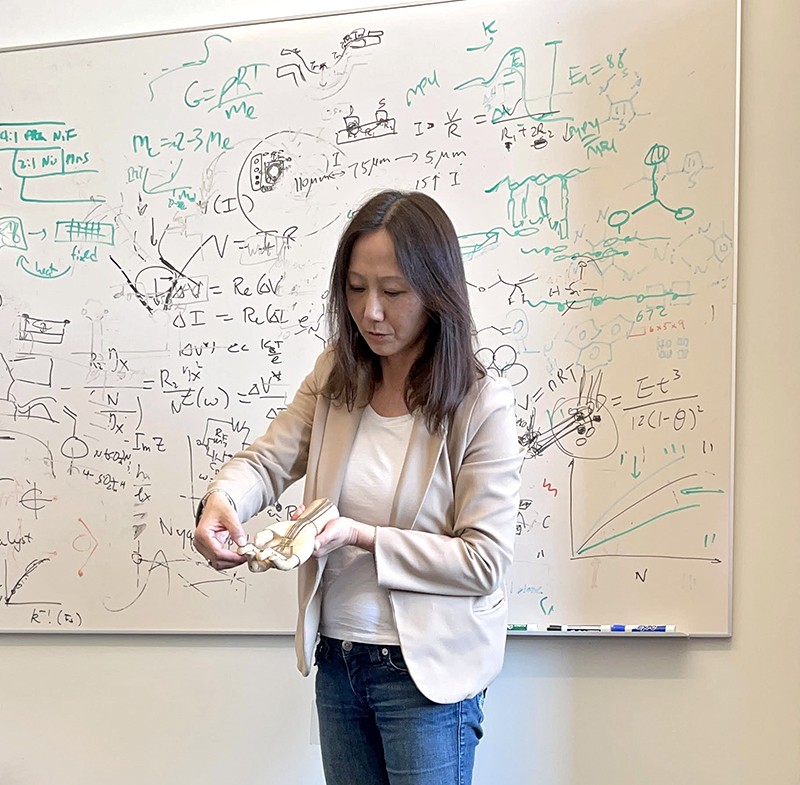

Inspiration can come from anywhere. For Radhika Nagpal, it came from her honeymoon.

Nagpal was snorkelling in the Bahamas when she was approached by a school of colourful striped fish, moving as one. “They come straight at you and check you out and then move off,” says Nagpal, now a mechanical engineer at Princeton University in New Jersey. “I was like, ‘Wow, that is a collective behaviour that I’ve never seen.’”

Her mind returned to those curious fish years later, when she was pondering ways to build swarms of robots that could coordinate their behaviour in challenging environments. The result is a school of robotic fish, called Bluebots, that can coordinate their activity with their fellows [1].

Nagpal’s school is small, only ten fish with limited abilities. The fish are equipped with blue LEDs so that their comrades can spot them underwater. Simple rules in their programming, such as swimming to the left when they see another Bluebot, enable them to synchronize their movement. But Nagpal hopes to eventually build larger collectives with more complex behaviours.
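
A local rule of this kind can be illustrated with a toy simulation. The sketch below is not the Bluebots' actual control code: the `Bot` class, the field of view, the sensing range, the turn angle and the speed are all assumptions made for illustration. Each simulated fish turns left by a fixed angle whenever another fish falls inside its forward field of view, and otherwise swims straight ahead.

```python
import math
from dataclasses import dataclass


@dataclass
class Bot:
    """A hypothetical point-like robot fish on a 2D plane."""
    x: float
    y: float
    heading: float  # radians; 0 points along +x, counter-clockwise is positive


def sees_neighbour(bot, bots, fov=math.pi / 2, max_range=5.0):
    """Return True if any other bot lies inside this bot's forward field of view."""
    for other in bots:
        if other is bot:
            continue
        dx, dy = other.x - bot.x, other.y - bot.y
        dist = math.hypot(dx, dy)
        if dist == 0 or dist > max_range:
            continue
        # Bearing of the neighbour relative to the heading, wrapped to [-pi, pi]
        bearing = math.atan2(dy, dx) - bot.heading
        bearing = math.atan2(math.sin(bearing), math.cos(bearing))
        if abs(bearing) <= fov / 2:
            return True
    return False


def step(bots, turn=math.radians(15), speed=0.1):
    """Advance every bot one tick: turn left if a neighbour is visible, then swim."""
    # Decide from a snapshot first, so the update order does not matter
    decisions = [sees_neighbour(bot, bots) for bot in bots]
    for bot, saw in zip(bots, decisions):
        if saw:
            bot.heading += turn  # counter-clockwise, i.e. to the left
        bot.x += speed * math.cos(bot.heading)
        bot.y += speed * math.sin(bot.heading)


# Two fish swimming in the same direction, one directly behind the other
lead = Bot(x=1.0, y=0.0, heading=0.0)
follower = Bot(x=0.0, y=0.0, heading=0.0)
step([lead, follower])
```

In this example only the follower can see another fish, so it veers left while the lead fish carries on straight. Each fish needs no knowledge beyond what is in front of it, which is what makes rules like this attractive for large groups.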

Such robotic schools could be tasked with locating and recording data on coral reefs to help researchers to study the reefs’ health over time. Just as living fish in a school might engage in different behaviours simultaneously (some mating, some caring for young, others finding food) but suddenly move as one when a predator approaches, robotic fish would have to perform individual tasks while communicating with each other when it’s time to do something different.

“The majority of what my lab really looks at is the coordination techniques — what kinds of algorithms have evolved in nature to make systems work well together?” she says.

Many roboticists are looking to biology for inspiration in robot design, particularly in the area of locomotion. Although big industrial robots in vehicle factories, for instance, remain anchored in place, other robots will be more useful if they can move through the world, performing different tasks and coordinating their behaviour.

Some robots can already move on wheels, but wheeled robots cannot climb stairs and are stymied by rough or shifting terrain, such as sand or gravel. By borrowing movement strategies from nature — walking, crawling, swimming, slithering, flying or leaping — robots could gain new functionality. They might perform search-and-rescue operations after an earthquake, or explore caves that are too small or unstable for people to venture into. They could carry out underwater inspections of ships and bridges. And unmanned aerial vehicles (UAVs) could fly more efficiently and better handle turbulence.

“The basic idea is looking to nature to see how things can potentially be done differently, how we can improve our automated systems,” says Michael Tolley, a mechanical engineer who heads the Bioinspired Robotics and Design Lab at the University of California, San Diego.

See Spot run

Perhaps the most obvious strategy for robotic motion is walking, and legged robots do exist. Spot, a low-slung, four-legged machine that looks like a headless yellow dog, can climb uphill and navigate stairs. Its developer, Boston Dynamics in Waltham, Massachusetts, markets the US$74,500 device for mobile inspection of factories, construction sites and hazardous environments. A similar-looking robot, the Mini Cheetah, has been developed at the Massachusetts Institute of Technology (MIT) in Cambridge. “More than 90% of land animals are quadruped,” says Sangbae Kim, a mechanical engineer at MIT who helped to design the Mini Cheetah. “So a natural place to look at is the quadrupedal world. And the cheetah is a king of that world in terms of the speed.”

The Mini Cheetah can already perform backflips, and it runs as fast as 3.9 metres per second — about one-tenth as fast as an actual cheetah, but speedy for a robot. Now Kim is developing control software that he hopes will allow the robot to move smoothly across varying surfaces. This is challenging because the rules for how best to move a limb vary depending on the friction and hardness of the surface. Currently, moving from grass to concrete, or running up a gravelly hill, can cause the robot to stumble. “It runs really ugly and awkward,” Kim says. “It doesn’t fall, but it’s not efficient.”

Nevertheless, quadruped robots are one of the better options for negotiating difficult terrain, says J. Sean Humbert, a mechanical engineer who directs the Bio-Inspired Perception and Robotics Laboratory at the University of Colorado, Boulder. Last year, his group took part in the US Defense Advanced Research Projects Agency’s Subterranean Challenge, in which robots were tasked with navigating tunnels, caves and urban settings to find particular targets; the team took third place, winning US$500,000. “The robots that ended up doing really well across the teams were the legged robots,” Humbert says. But faced with a sandy, uphill, rocky landscape, these robots struggled. “Even our Spot robot tipped over and slid around,” he says.

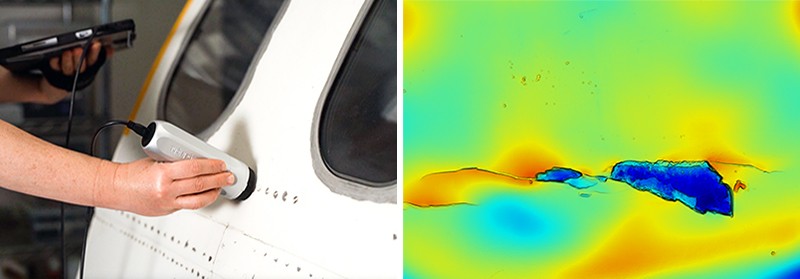

Feel the strain

One possible solution, Humbert says, is to endow robots with animals’ innate ability to sense and respond to mechanosensory information, such as pressure, strain or vibration. He’s been taking that approach with flying machines by embedding strain sensors in the wings of fixed-wing UAVs, as well as in the arms of quadrotor drones, which rely on spinning blades to fly and hover.

The work grew out of studies of honey bees. When Humbert placed bees in a wind tunnel and hit them with sudden gusts of air, their flight would be momentarily disturbed. After a quick change in the pattern of their wing beats, they would right themselves. Honey bees beat their wings 251 times per second, and the animals could make these corrections in just 15 to 20 beats, or roughly 0.06 to 0.08 seconds. “Our conclusion was that [that] had to be mechanosensory information,” Humbert says. “Vision is just not fast enough to correct the spins that we’re seeing.” If a drone could similarly sense a disturbance and automatically correct for it that rapidly, he says, it would be much less likely to crash or be knocked off course.
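
The correction time follows directly from the wingbeat rate. As a quick check, the sketch below divides the number of beats by the quoted rate of 251 beats per second; the function and variable names are illustrative only.

```python
WINGBEATS_PER_SECOND = 251  # rate quoted for honey bees


def correction_time(beats, rate=WINGBEATS_PER_SECOND):
    """Return the time in seconds taken by a given number of wing beats."""
    return beats / rate


fastest = correction_time(15)  # lower end of the quoted range
slowest = correction_time(20)  # upper end of the quoted range
```

At the quoted rate, 15 beats take roughly 0.06 seconds and 20 beats roughly 0.08 seconds.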

Fish also respond to mechanosensory stimuli, using a system of sensory organs known as the lateral line. The structure consists of hundreds of tiny sensors spread along the head, trunk and tail fin, and it enables fish to sense changes in the motion and pressure of water caused by obstacles, such as rocks and other animals. “Fish are sensing all of that and are using that, as well as vision, to position themselves relative to each other,” Nagpal says. No comparable underwater pressure sensor exists, but her team hopes to develop one to improve the Bluebots’ navigation.

In San Diego, Tolley is exploring robots built from polymers or other pliable materials that can more safely interact with humans or squeeze through tight spaces. Squishy, pliable robots could have more flexible motion than hard robots with only a few joints, but getting them to walk on soft legs is a challenge.

Tolley designed a robot with four soft legs, each divided into three chambers2. Pressurized air first enters one chamber, then moves to the next. This movement causes the legs to bend, then relax. By alternately activating opposing pairs of legs, the robot trundles along like a turtle. And because it does not need electronic controls, its design could be useful even in the presence of electromagnetic interference.

Hard or soft, one issue robots struggle with is falling over. If a multimillion-dollar robot trips over a rock on Mars, an entire mission could be jeopardized. Some researchers are looking to insects for solutions, particularly click beetles, which can jump up to 20 times their body length without using their legs3.

Click beetles use a muscle to compress soft tissue, building up energy; a latch system holds the compressed tissue in place. When the animal releases the latch, producing its characteristic clicking sound, the tissue expands rapidly and the beetle is launched into the air, accelerating at about 530 times the force of gravity. (By comparison, a rider on a roller coaster typically experiences about four times the force of gravity.) If a robot could do that, it would have a mechanism for righting itself after tipping over, says Aimy Wissa, a mechanical and aerospace engineer who runs the Bio-inspired Adaptive Morphology Lab at Princeton.

Even more interesting, Wissa says, is that the beetle can perform this manoeuvre four or five times in rapid succession, without suffering any apparent damage. She’s trying to develop models that explain how the energy is rapidly dissipated without harming the insect, which could prove useful in applications involving rapid acceleration and deceleration, such as bulletproof vests. Other creatures also store and release energy to trigger rapid motion, including fruit-fly larvae and Venus flytraps (Dionaea muscipula), and understanding how they do so could lead to more-responsive artificial muscles, Tolley says.

Totally legless

In some places, such as narrow underground passages or on unstable surfaces, legs could require too much space or be too unstable to propel a robot. Howie Choset, a computer scientist at the Robotics Institute of Carnegie Mellon University in Pittsburgh, Pennsylvania, builds snake-like robots with 16 joints that provide a range of motion that could drive everything from surgical instruments wending through the body to reconnaissance robots exploring archaeological sites.

In one early project, Choset took his robo-snakes to the Red Sea, where ancient Egyptians had dug caves to store boats that they’d built for trade with the Land of Punt, thought to be located in modern Somalia. The caves were no longer safe for human explorers, but snake robots seemed well suited to the task — until they didn’t. “The truth is, we got stuck,” Choset says. “We couldn’t go up and down the sandy inclines.”

To work out how a real snake would approach the problem, Choset looked to sidewinders, snakes that move by thrusting their bodies sideways in an S-shaped curve, gliding easily over sand4. Because sand is granular, it can behave as either a liquid or a solid, depending on how much force is applied. Choset found that sidewinders can exert the right amount of pushing force so that the sand remains solid underneath them and supports their bodies. “It wasn’t until we started looking at the real snakes, the sidewinders, and how they moved on sandy terrains that we were able to understand how to make our robot work on sandy terrains,” he says.

As for Wissa, she’s trying to build robots that can both swim and fly, using an animal that can do both as inspiration: flying fish5. These creatures use their pelvic fins to skim across the water’s surface and then launch into the air, where they can glide up to 400 metres.

Flying fish, Wissa explains, are “actually very good gliders”. But when they drop back to the water, they don’t submerge. “They actually just dip their caudal fin and they flap it vigorously, and then they can take off again,” Wissa says. “You can think of it as a taxiing manoeuvre.” She hopes to learn enough about this behaviour to develop a robot that can move through both air and water using the same propulsion mechanisms. “We’re very good as engineers in designing things for a single function,” Wissa says. “Where nature really can teach us a lot of lessons is this concept of multi-functionality.”

For another type of multi-functional locomotion, Wissa focuses on grasshoppers, which can jump and then open their wings to glide. She hopes to understand what makes them such good gliders when many other insects rely on high-frequency flapping to fly. Perhaps, she says, it has to do with their wing shape.

Wissa also seeks inspiration from birds. She’s used aerodynamic testing and structural modelling to investigate covert feathers — small, stiff feathers that overlap other feathers on a bird’s wings and tail6. When a bird tries to land in windy conditions, the covert feathers on the wings deploy, either passively in response to air flow or actively under control of a tendon. The covert feathers alter the shape of the wing and give the bird finer control over its interaction with air flow, and don’t require as much energy as flapping the whole wing. By learning to understand the physics of these feathers, Wissa hopes to improve the flight of a UAV.

A two-way street

Biology has informed robotics, but the engineering involved can also provide insights into animal kinesiology. “We didn’t start by looking at biology,” Choset says. Instead, he mathematically modelled the fundamental principles of the motion he was interested in. “And in doing so, something kind of magical happened — we started coming up with ways to explain how biology works. So, is it robot-inspired biology or biologically inspired robots?”

Other engineers have had similar experiences. Nagpal is collaborating with ichthyologist George Lauder at Harvard University in Cambridge to model the hydrodynamics of schooling, to see whether the formation provides living fish with an energy benefit. And designs that make drones fly in a more energy-efficient way might help to explain how birds and insects have evolved to do something similar. Wissa hopes her work, in addition to building flying, swimming robots, will lead to a greater understanding of flying fish. “We’re using this model to actually test hypotheses about nature, about why some species of flying fish have enlarged pelvic fins while others don’t,” Wissa says.

But despite the links between biology and engineering, don’t expect bio-inspired robots to ultimately look like the creatures that influenced them. Wissa says that, although many first attempts at mimicking biology resemble the original biological forms, scientists’ ultimate aim is to understand the principles behind how the systems operate, and then adapt those to different structures and materials. “We’re just copying the physics and the rules for how things work,” she says, “and then making engineering systems that serve the same function.”

doi: https://doi.org/10.1038/d41586-022-03014-x

This article is part of Nature Outlook: Robotics and artificial intelligence, an editorially independent supplement produced with the financial support of third parties.

References

- Berlinger, F., Gauci, M. & Nagpal, R. Sci. Robot. 6, eabd8668 (2021).

- Drotman, D., Jadhav, S., Sharp, D., Chan, C. & Tolley, M. T. Sci. Robot. 6, eaay2627 (2021).

- Bolmin, O. et al. Proc. Natl Acad. Sci. USA 118, e2014569118 (2021).

- Gong, C., Hatton, R. L. & Choset, H. In 2012 IEEE International Conference on Robotics and Automation 4222–4227 (2012).

- Saro-Cortes, V. et al. Integr. Comp. Biol. https://doi.org/10.1093/icb/icac101 (2022).

- Duan, C. & Wissa, A. Bioinspir. Biomim. 16, 046020 (2021).

This article was authored by Anthony King and originally published to Nature

Cancer drugs usually take a scattergun approach. Chemotherapies inevitably hit healthy bystander cells while blasting tumours, sparking a slew of side effects. It is also a big ask for an anticancer drug to find and destroy an entire tumour — some are difficult to reach, or hard to penetrate once located.

A long-dreamed-of alternative is to inject a battalion of tiny robots into a person with cancer. These miniature machines could navigate directly to a tumour and smartly deploy a therapeutic payload right where it is needed. “It is very difficult for drugs to penetrate through biological barriers, such as the blood–brain barrier or mucus of the gut, but a microrobot can do that,” says Wei Gao, a medical engineer at the California Institute of Technology in Pasadena.

Among his inspirations is the 1966 film Fantastic Voyage, in which a miniaturized submarine goes on a mission to remove a blood clot in a scientist’s brain, piloted through the bloodstream by a similarly shrunken crew. Although most of the film remains firmly in the realm of science fiction, progress on miniature medical machines in the past ten years has seen experiments move into animals for the first time.

There are now numerous micrometre- and nanometre-scale robots that can propel themselves through biological media, such as the matrix between cells and the contents of the gastrointestinal tract. Some are moved and steered by outside forces, such as magnetic fields and ultrasound. Others are driven by onboard chemical engines, and some are even built on top of bacteria and human cells to take advantage of those cells’ inbuilt ability to get around. Whatever the source of propulsion, it is hoped that these tiny robots will be able to deliver therapies to places that a drug alone might not be able to reach, such as into the centre of solid tumours. However, even as those working on medical nano- and microrobots begin to collaborate more closely with clinicians, it is clear that the technology still has a long way to go on its fantastic journey towards the clinic.

Poetry in motion

One of the key challenges for a robot operating inside the human body is getting around. In Fantastic Voyage, the crew uses blood vessels to move through the body. However, it is here that reality must immediately diverge from fiction. “I love the movie,” says roboticist Bradley Nelson, gesturing to a copy of it in his office at the Swiss Federal Institute of Technology (ETH) Zurich in Switzerland. “But the physics are terrible.” Tiny robots would have severe difficulty swimming against the flow of blood, he says. Instead, they will initially be administered locally, then move towards their targets over short distances.

When it comes to design, size matters. “Propulsion through biological media becomes a lot easier as you get smaller, as below a micron bots slip between the network of macromolecules,” says Peer Fischer, a robotics researcher at the Max Planck Institute for Intelligent Systems in Stuttgart, Germany. Bots are therefore typically no more than 1–2 micrometres across. However, most do not fall below 300 nanometres. Below that size, it becomes more challenging to detect and track them in biological media, as well as more difficult to generate sufficient force to move them.

Scientists have several choices for how to get their bots moving. Some opt to provide power externally. For instance, in 2009, Fischer — who was working at Harvard University in Cambridge, Massachusetts, at the time, alongside fellow nanoroboticist Ambarish Ghosh — devised a glass propeller, just 1–2 micrometres in length, that could be rotated by a magnetic field1. This allowed the structure to move through water, and by adjusting the magnetic field, it could be steered with micrometre precision. In a 2018 study2, Fischer launched a swarm of micropropellers into a pig’s eye in vitro, and had them travel over centimetre distances through the gel-like vitreous humour into the retina — a rare demonstration of propulsion through real tissue. The swarm was able to slip through the network of biopolymers within the vitreous humour thanks in part to a silicone oil and fluorocarbon coating applied to each propeller. Inspired by the slippery surface that the carnivorous pitcher plant Nepenthes uses to catch insects, this minimized interactions between the micropropellers and biopolymers.

Another way to provide propulsion from outside the body is to use ultrasound. One group placed magnetic cores inside the membranes of red blood cells3, which also carried photoreactive compounds and oxygen. The cells’ distinctive biconcave shape and greater density than other blood components allowed them to be propelled using ultrasonic energy, with an external magnetic field acting on the metallic core to provide steering. Once the bots are in position, light can excite the photosensitive compound, which transfers energy to the oxygen and generates reactive oxygen species to damage cancer cells.

This hijacking of cells is proving to have therapeutic merits in other research projects. Some of the most promising strategies aimed at treating solid tumours involve human cells and other single-celled organisms jazzed up with synthetic parts. In Germany, a group led by Oliver Schmidt, a nanoscientist at Chemnitz University of Technology, has designed a biohybrid robot based on sperm cells4. These are some of the fastest motile cells, capable of hitting speeds of 5 millimetres per minute, Schmidt says. The hope is that these powerful swimmers can be harnessed to deliver drugs to tumours in the female reproductive tract, guided by magnetic fields. Already, it has been shown that they can be magnetically guided to a model tumour in a dish.

“We could load anticancer drugs efficiently into the head of the sperm, into the DNA,” says Schmidt. “Then the sperm can fuse with other cells when it pushes against them.” At the Chinese University of Hong Kong, meanwhile, nanoroboticist Li Zhang led the creation of microswimmers from Spirulina microalgae cloaked in the mineral magnetite. The team then tracked a swarm of them inside rodent stomachs using magnetic resonance imaging5. The biohybrids were shown to selectively target cancer cells. They also gradually degrade, reducing unwanted toxicity.

Another way to get micro- and nanobots moving is to fit them with a chemical engine: a catalyst drives a chemical reaction, creating a gradient on one side of the machine to generate propulsion. Samuel Sánchez, a chemist at the Institute for Bioengineering of Catalonia in Barcelona, Spain, is developing nanomotors driven by chemical reactions for use in treating bladder cancer. Some early devices relied on hydrogen peroxide as a fuel. Its breakdown, promoted by platinum, generated water and oxygen gas bubbles for propulsion. But hydrogen peroxide is toxic to cells even in minuscule amounts, so Sánchez has transitioned towards safer materials. His latest nanomotors are made up of honeycombed silica nanoparticles, tiny gold particles and the enzyme urease6. These 300–400-nm bots are driven forwards by the chemical breakdown of urea in the bladder into carbon dioxide and ammonia, and have been tested in the bladders of mice. “We can now move them and see them inside a living system,” says Sánchez.

Breaking through

A standard treatment for bladder cancer is surgery, followed by immunotherapy in the form of an infusion of a weakened strain of Mycobacterium bovis bacteria into the bladder, to prevent recurrence. The bacterium activates the person’s immune system, and is also the basis of the BCG vaccine for tuberculosis. “The clinicians tell us that this is one of the few things that has not changed over the past 60 years,” says Sánchez. There is a need to improve on BCG in oncology, according to his collaborator, urologic oncologist Antoni Vilaseca at the Hospital Clinic of Barcelona. Current treatments reduce recurrences and progression, “but we have not improved survival”, Vilaseca says. “Our patients are still dying.”

The nanobot approach that Sánchez is trying promises precision delivery. He plans to insert his bots into the bladder (or intravenously), to motor towards the cancer with their cargo of therapeutic agents to target cancer cells, using abundant urea as a fuel. He might use a magnetic field for guidance, if needed, but a more straightforward replacement of BCG with bots that do not require external control, perhaps using an antibody to bind a tumour marker, would please clinicians most. “If we can deliver our treatment to the tumour cells only, then we can reduce side effects and increase activity,” says Vilaseca.

Not all cancers can be reached by swimming through liquid, however. Natural physiological barriers can block efficient drug delivery. The gut wall, for example, allows absorption of nutrients into the bloodstream, and offers an avenue for getting therapies into bodies. “The gastrointestinal tract is the gateway to our body,” says Joseph Wang, a nanoengineer at the University of California, San Diego. However, a combination of cells, microbes and mucus stops many particles from accessing the rest of the body. To deliver some therapies, simply being in the intestine isn’t enough — they also need to be able to burrow through its defences to reach the bloodstream, and a nanomachine could help with this.

In 2015, Wang and his colleagues, including Gao, reported the first self-propelled robot in vivo, inside a mouse stomach7. Their zinc-based nanomotor dissolved in the harsh stomach acids, producing hydrogen bubbles that rocketed the robot forwards. In the lower gastrointestinal tract, they instead use magnesium. “Magnesium reacts with water to give a hydrogen bubble,” says Wang. In either case, the metal micromotors are encapsulated in a coating that dissolves at the right location, freeing the micromotor to propel the bot into the mucous wall.

Some bacteria have already worked out their own ways to sneak through the gut wall. Helicobacter pylori, which causes inflammation in the stomach, excretes urease enzymes to generate ammonia and liquefy the thick mucus that lines the stomach wall. Fischer envisages future micro- and nanorobots borrowing this approach to deliver drugs through the gut.

Solid tumours are another difficult place to deliver a drug. As these malignancies develop, a ravenous hunger for oxygen promotes an outside surface covered with blood vessels, while an oxygen-deprived core builds up within. Low oxygen levels force cells deep inside to switch to anaerobic metabolism and churn out lactic acid, creating acidic conditions. As the oxygen gradient builds, the tumour becomes increasingly difficult to penetrate. Nanoparticle drugs lack a force with which to muscle through a tumour’s fortifications, and typically less than 2% of them will make it inside8. Proponents of nanomachines think that they can do better.

Sylvain Martel, a nanoroboticist at Montreal Polytechnic in Canada, is trying to break into solid tumours using bacteria that naturally contain a chain of magnetic iron-oxide nanocrystals. In nature, these Magnetococcus species seek regions that have low oxygen. Martel has engineered such a bacterium to target active cancer cells deep inside tumours8. “We guide them with a magnetic field towards the tumour,” explains Martel, taking advantage of the magnetic crystals that the bacteria typically use like a compass for orientation. The precise locations of low-oxygen regions are uncertain even with imaging, but once these bacteria reach the right location, their autonomous capability kicks in and they motor towards low-oxygen regions. In a mouse, more than half the bacteria injected close to tumour grafts broke into this tumour region, each laden with dozens of drug-loaded liposomes. Martel cautions, however, that there is still some way to go before the technology is proven safe and effective for treating people with cancer.

In the Netherlands, chemist Daniela Wilson at Radboud University in Nijmegen and colleagues have developed enzyme-driven nanomotors powered by DNA that might similarly be able to autonomously home in on tumour cells9. The motors navigate towards areas that are richer in DNA, such as tumour cells that are undergoing apoptosis. “We want to create systems that are able to sense gradients by different endogenous fuels in the body,” Wilson says, suggesting that the higher levels of lactic acid or glucose typically found in tumours could also be used for targeting. Once in place, the autonomous bots seem to be picked up by cells more easily than passive particles are — perhaps because the bots push against cells.

Fiction versus reality

Inspirational though Fantastic Voyage might have been for many working in the field of medical nanorobotics, there are some who think the film has become a burden. “People think of this as science fiction, which excites people, but on the other hand they don’t take it so seriously,” says Martel. Fischer is similarly jaded by movie-inspired hype. “People sometimes write very liberally as if nanobots for cancer treatment are almost here,” he says. “But this is not even in clinical trials right now.”

Nonetheless, advances in the past ten years have raised expectations of what is possible with current technology. “There’s nothing more fun than building a machine and watching it move. It’s a blast,” says Nelson. But having something wiggling under a microscope no longer has the same draw, without medical context. “You start thinking, ‘how could this benefit society?’” he says.

With this in mind, many researchers creating nanorobots for medical purposes are working more closely with clinicians than ever before. “You find a lot of young doctors who are really interested in what the new technologies can do,” Nelson says. Neurologist Philipp Gruber, who works with stroke patients at Aarau Cantonal Hospital in Switzerland, began a collaboration with Nelson two years ago after contacting ETH Zurich. The pair share an ambition to use steerable microbots to dissolve clots in people’s brains after ischaemic stroke — either mechanically, or by delivering a drug. “Brad knows everything about engineering,” says Gruber, “but we can advise about the problems we face in the clinic and the limitations of current treatment options.”

Sánchez tells a similar story: while he began talking to physicians around a decade ago, their interest has warmed considerably since his experiments in animals began three to four years ago. “We are still in the lab, but at least we are working with human cells and human organoids, which is a step forward,” says his collaborator Vilaseca.

As these seedlings of clinical collaborations take root, it is likely that oncology applications will be the earliest movers — particularly those that resemble current treatments, such as infusing microbots instead of BCG into cancerous bladders. But even these therapeutic uses are probably at least 7–10 years away. In the nearer term, there might be simpler tasks that nanobots can be used to accomplish, according to those who follow the field closely.

For example, Martin Pumera, a nanoroboticist at the University of Chemistry and Technology in Prague, is interested in improving dental care by landing nanobots beneath titanium tooth implants10. The tiny gap between the metal implants and gum tissue is an ideal niche for bacterial biofilms to form, triggering infection and inflammation. When this happens, the implant must often be removed, the area cleaned, and a new implant installed — an expensive and painful procedure. He is collaborating with dental surgeon Karel Klíma at Charles University in Prague.

Another problem the two are tackling is oral bacteria gaining access to tissue during surgery of the jaws and face. “A biofilm can establish very quickly, and that can mean removing titanium plates and screws after surgery, even before a fracture heals,” says Klíma. A titanium oxide robot could be administered to implants using a syringe, then activated chemically or with light to generate reactive oxygen species to kill the bacteria. Examples a few micrometres in length have so far been constructed, but much smaller bots — only a few hundred nanometres in length — are the ultimate aim.

Clearly, this is a long way from parachuting bots into hard-to-reach tumours deep inside a person. But the rising tide of in vivo experiments and the increasing involvement of clinicians suggests that microrobots might just be leaving port on their long journey towards the clinic.

doi: https://doi.org/10.1038/d41586-022-00859-0

This article is part of Nature Outlook: Robotics and artificial intelligence, an editorially independent supplement produced with the financial support of third parties.

References

- Ghosh, A. & Fischer, P. Nano Lett. 9, 2243–2245 (2009).

- Wu, Z. et al. Sci. Adv. 4, eaat4388 (2018).

- Gao, C. et al. ACS Appl. Mater. Interfaces 11, 23392–23400 (2019).

- Xu, H. et al. ACS Nano 12, 327–337 (2018).

- Yan, X. et al. Sci. Robot. 2, eaaq1155 (2017).

- Hortelao, A. C. et al. Sci. Robot. 6, eabd2823 (2021).

- Gao, W. et al. ACS Nano 9, 117–123 (2015).

- Felfoul, O. et al. Nature Nanotechnol. 11, 941–947 (2016).

- Ye, Y. et al. Nano Lett. 21, 8086–8094 (2021).

- Villa, K. et al. Cell Rep. Phys. Sci. 1, 100181 (2020).

This article was authored by Neil Savage and originally published to Nature

Bing Liu was road testing a self-driving car, when suddenly something went wrong. The vehicle had been operating smoothly until it reached a T-junction and refused to move. Liu and the car’s other occupants were baffled. The road they were on was deserted, with no pedestrians or other cars in sight. “We looked around, we noticed nothing in the front, or in the back. I mean, there was nothing,” says Liu, a computer engineer at the University of Illinois Chicago.

Stumped, the engineers took over control of the vehicle and drove back to the laboratory to review the trip. They worked out that the car had been stopped by a pebble in the road. It wasn’t something a person would even notice, but when it showed up on the car’s sensors it registered as an unknown object — something the artificial intelligence (AI) system driving the car had not encountered before.

The problem wasn’t with the AI algorithm as such — it performed as intended, stopping short of the unknown object to be on the safe side. The issue was that once the AI had finished its training, using simulations to develop a model that told it the differences between a clear road and an obstacle, it could learn nothing more. When it encountered something that had not been part of its training data, such as the pebble or even a dark spot on the road, the AI did not know how to react. People can build on what they’ve learnt and adapt as their environment changes; most AI systems are locked into what they already know.

In the real world, of course, unexpected situations inevitably arise. Therefore, Liu argues that any system aiming to perform learnt tasks outside a lab needs to be capable of on-the-job learning — supplementing the model it’s already developed with new data that it encounters. The car could, for instance, detect another car driving through a dark patch on the road with no problem, and decide to imitate it, learning in the process that a wet bit of road was not a problem. In the case of the pebble, it could use a voice interface to ask the car’s occupant what to do. If the rider said it was safe to continue, it could drive on, and it could then call on that answer for its next pebble encounter. “If the system can continually learn, this problem is easily solved,” Liu says.
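The pebble scenario can be sketched in a few lines. This is a toy illustration only, not Liu's system: the class, the observation labels and the `occupant` callback are all hypothetical names made up for the example. It shows the basic pattern of asking when something unfamiliar appears and remembering the answer.

```python
from typing import Callable, Dict


class OnTheJobLearner:
    """Toy driving policy that extends what it knows when it meets something new."""

    def __init__(self, known_actions: Dict[str, str]) -> None:
        # Knowledge fixed at training time, e.g. {"clear_road": "drive"}.
        self.known_actions = dict(known_actions)

    def decide(self, observation: str, ask_occupant: Callable[[str], str]) -> str:
        # Familiar observation: reuse the stored answer.
        if observation in self.known_actions:
            return self.known_actions[observation]
        # Unfamiliar observation: ask, then remember the answer for next time.
        action = ask_occupant(observation)
        self.known_actions[observation] = action
        return action


questions_asked = []


def occupant(observation: str) -> str:
    """Hypothetical rider who is consulted through a voice interface."""
    questions_asked.append(observation)
    return "drive"  # the rider says it is safe to continue


car = OnTheJobLearner({"clear_road": "drive", "pedestrian": "stop"})
first = car.decide("pebble", occupant)   # unknown object: asks the occupant
second = car.decide("pebble", occupant)  # now known: no question needed
print(first, second, len(questions_asked))  # prints "drive drive 1"
```

A real system would have to generalize from one pebble to the next rather than match an exact label, which is where the learning methods discussed in the rest of the article come in.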

Such continual learning, also known as lifelong learning, is the next step in the evolution of AI. Much AI relies on neural networks, which take data and pass them through a series of computational units, known as artificial neurons, which perform small mathematical functions on the data. Eventually the network develops a statistical model of the data that it can then match to new inputs. Researchers, who have based these neural networks on the operation of the human brain, are looking to humans again for inspiration on how to make AI systems that can keep learning as they encounter new information. Some groups are trying to make computer neurons more complex so they’re more like neurons in living organisms. Others are imitating the growth of new neurons in humans so machines can react to fresh experiences. And some are simulating dream states to overcome a problem of forgetfulness. Lifelong learning is necessary not only for self-driving cars, but for any intelligent system that has to deal with surprises, such as chatbots, which are expected to answer questions about a product or service, and robots that can roam freely and interact with humans. “Pretty much any instance where you deploy AI in the future, you would see the need for lifelong learning,” says Dhireesha Kudithipudi, a computer scientist who directs the MATRIX AI Consortium for Human Well-Being at the University of Texas at San Antonio.

Continual learning will be necessary if AI is to truly live up to its name. “AI, to date, is really not intelligent,” says Hava Siegelmann, a computer scientist at the University of Massachusetts Amherst who created the Lifelong Learning Machines research-funding initiative for the US Defense Advanced Research Projects Agency. “If it’s a neural network, you train it in advance, you give it a data set and that’s all. It does not have the ability to improve with time.”

Model making

In the past decade, computers have become adept at tasks such as classifying cats or tumours in images, identifying sentiment in written language, and winning at chess. Researchers might, for instance, feed the computer photos that have been labelled by humans as containing cats. The computer receives the photos, which it interprets as numerical descriptions of pixels with various colour and brightness values, and runs them through layers of artificial neurons. Each neuron starts with a randomly chosen weight, a value by which it multiplies the value of the input data. The computer runs the input data through the layers of neurons and checks the output data against validation data to see how accurate the results are. It then repeats the process, altering the weights in each iteration until the output reaches a high accuracy. The process produces a statistical model of the values and the placement of pixels that define a cat. The network can then analyse a new photo and decide whether it matches the model — that is, whether there’s a cat in the picture. But that cat model, once developed, is pretty much set in stone.
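The loop of random starting weights, an accuracy check and repeated weight adjustment can be illustrated with a single artificial neuron. The sketch below is a deliberate simplification: it uses the classic perceptron update rule on a handful of invented two-number "images", and it checks accuracy on the training examples themselves rather than on separate validation data. Real image classifiers use many layers and far more data.

```python
import random

random.seed(0)

# Toy "images": two pixel-like features each, labelled 1 (cat) or 0 (not cat).
# The numbers are invented for illustration.
training_data = [
    ((0.9, 0.8), 1), ((0.8, 0.9), 1), ((0.7, 0.95), 1), ((0.95, 0.7), 1),
    ((0.1, 0.2), 0), ((0.2, 0.1), 0), ((0.3, 0.05), 0), ((0.05, 0.3), 0),
]

# Start from randomly chosen weights.
weights = [random.uniform(-1, 1) for _ in range(2)]
bias = random.uniform(-1, 1)


def predict(features):
    """One artificial neuron: weighted sum followed by a threshold."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0


def accuracy(data):
    """Fraction of examples whose predicted label matches the human label."""
    return sum(predict(x) == label for x, label in data) / len(data)


learning_rate = 0.1
for epoch in range(1000):
    if accuracy(training_data) == 1.0:
        break  # stop once every example is classified correctly
    for features, label in training_data:
        error = label - predict(features)
        # Nudge each weight in the direction that reduces the error.
        for i, x in enumerate(features):
            weights[i] += learning_rate * error * x
        bias += learning_rate * error

print(f"accuracy after training: {accuracy(training_data):.2f}")
```

Once the loop ends, the weights are frozen. Nothing in this code lets the neuron revise them when it later meets an example unlike anything in `training_data`, which is the limitation the article describes.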

One way to get the computer to learn to identify many objects would be to develop lots of models. You could train one neural network to recognize cats and another to recognize dogs. That would require two data sets, one for each animal, and would double the time and computing power needed compared with developing a single model. But suppose you wanted the computer to distinguish between pictures of cats and dogs. You would have to train a third network, either using all the original data or comparing the two existing models. Add other animals into the mix and yet more models must be developed.

Training and storing more models requires greater resources, and this can quickly become a problem. Training a neural network can take reams of data and weeks of time. For instance, an AI system called GPT-3, which learnt to produce text that sounds as if it was written by a human, required almost 15 days of training on 10,000 high-end computer processors1. The ImageNet data set, which is often used to train neural networks in object recognition, contains more than 14 million images. Depending on the subset of the total number of images that is used, it can take from a few minutes to more than a day and a half to download. Any machine that has to spend days re-learning a task each time it encounters new information will essentially grind to a halt.

One system that could make the generation of multiple models more efficient is Self-Net2, created by Rolando Estrada, a computer scientist at Georgia State University in Atlanta, and his students Jaya Mandivarapu and Blake Camp. Self-Net compresses the models, to prevent a system with a lot of different animal models from growing too unwieldy.

The system uses an autoencoder, a separate neural network that learns which parameters — such as clusters of pixels in the case of image-recognition tasks — the original neural network focused on when building its model. One layer of neurons in the middle of the autoencoder forces the machine to pick a tiny subset of the most important weights of the model. There might be 10,000 numerical values going into the model and another 10,000 coming out, but in the middle layer the autoencoder reduces that to just 10 numbers. So the system has to find the ten weights that will allow it to get the most accurate output, Estrada says.

The process is similar to compressing a large TIFF image file down to a smaller JPEG, he says; there’s a small loss of fidelity, but what is left is good enough. The system tosses out most of the original input data, and then saves the ten best weights. It can then use those to perform the same cat-identification task with almost the same accuracy, without having to store enormous amounts of data.
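The bottleneck idea can be illustrated with a toy autoencoder. The sketch below is not Self-Net's implementation: it is a linear autoencoder with shared encoder and decoder weights that squeezes four-number vectors through a single middle value, whereas the article describes going from 10,000 values to 10. The vectors stand in for model weights and are constructed so that one number really is enough to rebuild them; real models would lose some fidelity.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def encode(code_weights, vector):
    """Bottleneck: squeeze the whole vector into a single number."""
    return dot(code_weights, vector)

def decode(code_weights, code):
    """Expand the single number back into a full-length vector."""
    return [code * w for w in code_weights]

def reconstruction_error(code_weights, vectors):
    """Mean squared difference between each vector and its rebuilt copy."""
    total = 0.0
    for v in vectors:
        rebuilt = decode(code_weights, encode(code_weights, v))
        total += sum((a - b) ** 2 for a, b in zip(v, rebuilt))
    return total / len(vectors)

def train_autoencoder(vectors, steps=500, rate=0.1):
    """Gradient descent on the reconstruction error."""
    code_weights = [0.1] * len(vectors[0])  # arbitrary non-zero start
    for _ in range(steps):
        grad = [0.0] * len(code_weights)
        for v in vectors:
            code = encode(code_weights, v)
            residual = [a - b for a, b in zip(v, decode(code_weights, code))]
            overlap = dot(code_weights, residual)
            for i in range(len(grad)):
                grad[i] += -2.0 * (code * residual[i] + overlap * v[i])
        code_weights = [w - rate * g / len(vectors)
                        for w, g in zip(code_weights, grad)]
    return code_weights

# Hypothetical "model weights": four vectors that share one underlying pattern.
pattern = [0.8, 0.4, 0.4, 0.2]
weight_vectors = [[t * p for p in pattern] for t in (1.0, -0.5, 2.0, 0.5)]

untrained = [0.1] * 4
trained = train_autoencoder(weight_vectors)
print("error before training:", reconstruction_error(untrained, weight_vectors))
print("error after training: ", reconstruction_error(trained, weight_vectors))
print("stored code for the first vector:", encode(trained, weight_vectors[0]))
```

After training, only the single code per vector and the shared `code_weights` need to be kept, rather than every original value.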

To streamline the creation of models, computer scientists often use pre-training. Models that are trained to perform similar tasks have to learn similar parameters, at least in the early stages. Any neural network learning to recognize objects in images, for instance, first needs to learn to identify diagonal and vertical lines. There’s no need to start from scratch each time, so newer models can be pre-trained with the weights that already recognize those basic features. To make models that can recognize cows or pigs or kangaroos, Estrada can pre-train other neural networks with the parameters from his autoencoder. Because all animals share some of the same facial features, even if the details of size or shape are different, such pre-training allows new models to be generated more efficiently.

The system is not a perfect way to get networks to learn on the job, Estrada says. A human still has to tell the machine when to switch tasks; for example, when to start looking for horses instead of cows. That requires a human to stay in the loop, and it might not always be obvious to a person that it’s time for the machine to do something different. But Estrada hopes to find a way to automate task switching so the computer can learn to identify characteristics of the input data and use that to decide which model it should use, so it can keep operating without interruption.

Out with the old

It might seem that the obvious course is not to make multiple models but rather to grow a network. Instead of developing two networks for recognizing cats and horses respectively, for instance, it might appear easier to teach the cat-savvy network to also recognize horses. This approach, however, forces AI designers to confront one of the main issues in lifelong learning, a phenomenon known as catastrophic forgetting. A network trained to recognize cats will develop a set of weights across its artificial neurons that are specific to that task. If it is then asked to start identifying horses, it will start readjusting the weights to make it more accurate for horses. The model will no longer contain the right weights for cats, causing it to essentially forget what a cat looks like. “The memory is in the weights. When you train it with new information, you write on the same weights,” says Siegelmann. “You can have a billion examples of a car driving, and now you teach it 200 examples related to some accident that you don’t want to happen, and it may know these 200 cases and forget the billion.”
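A deliberately tiny example makes the overwriting visible. The sketch below assumes a single neuron whose output means "cat" when low and "horse" when high, and two-number picture descriptions that are invented for illustration. It is trained on cats first and then on horses alone, with nothing to protect the earlier weights.

```python
import math

# Hypothetical two-number descriptions of pictures; label 0 = cat, 1 = horse.
CATS = [([1.0, 0.2], 0), ([0.9, 0.3], 0), ([1.1, 0.2], 0)]
HORSES = [([0.2, 1.0], 1), ([0.3, 0.9], 1), ([0.2, 1.1], 1)]

def score(model, features):
    """Probability, according to the model, that the picture shows a horse."""
    z = sum(w * x for w, x in zip(model["weights"], features)) + model["bias"]
    return 1.0 / (1.0 + math.exp(-z))

def accuracy(model, examples):
    hits = sum((score(model, x) > 0.5) == (label == 1) for x, label in examples)
    return hits / len(examples)

def train(model, examples, passes=200, step=0.5):
    """Gradient descent that writes straight onto the model's only weights."""
    for _ in range(passes):
        for features, label in examples:
            error = score(model, features) - label
            model["weights"] = [w - step * error * x
                                for w, x in zip(model["weights"], features)]
            model["bias"] -= step * error

model = {"weights": [0.0, 0.0], "bias": 0.0}

train(model, CATS)                    # first task: cats only
cats_before = accuracy(model, CATS)

train(model, HORSES)                  # second task: horses only
cats_after = accuracy(model, CATS)
horses_after = accuracy(model, HORSES)

print("cat accuracy after learning cats:  ", cats_before)
print("cat accuracy after learning horses:", cats_after)
print("horse accuracy:                    ", horses_after)
```

Because the second round of training adjusts the same weights and bias that encoded the first task, the cat accuracy falls once the horse data have been learnt.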

One method of overcoming catastrophic forgetting uses replay — that is, taking data from a previously learnt task and interweaving them with new training data. This approach, however, runs head-on into the resource problem. “Replay mechanisms are very memory hungry and computationally hungry, so we do not have models that can solve these problems in a resource-efficient way,” Kudithipudi says. There might also be reasons not to store data, such as concerns about privacy or security, or because they belong to someone unwilling to share them indefinitely.
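In its simplest form, replay means mixing stored examples from the old task into the new training data. The sketch below assumes the same kind of toy set-up as a one-neuron cat-or-horse classifier, with invented numbers, and keeps every old example, which is exactly the storage cost that the quote above warns about.

```python
import math

# Hypothetical two-number descriptions of pictures; label 0 = cat, 1 = horse.
CATS = [([1.0, 0.2], 0), ([0.9, 0.3], 0), ([1.1, 0.2], 0)]
HORSES = [([0.2, 1.0], 1), ([0.3, 0.9], 1), ([0.2, 1.1], 1)]

def score(model, features):
    """Probability, according to the model, that the picture shows a horse."""
    z = sum(w * x for w, x in zip(model["weights"], features)) + model["bias"]
    return 1.0 / (1.0 + math.exp(-z))

def accuracy(model, examples):
    hits = sum((score(model, x) > 0.5) == (label == 1) for x, label in examples)
    return hits / len(examples)

def train(model, examples, passes=200, step=0.5):
    for _ in range(passes):
        for features, label in examples:
            error = score(model, features) - label
            model["weights"] = [w - step * error * x
                                for w, x in zip(model["weights"], features)]
            model["bias"] -= step * error

def interleave(new_examples, replayed_examples):
    """Alternate each new example with one stored example from the old task."""
    mixed = []
    for new, old in zip(new_examples, replayed_examples):
        mixed.extend([new, old])
    return mixed

model = {"weights": [0.0, 0.0], "bias": 0.0}
train(model, CATS)                        # first task: cats only
train(model, interleave(HORSES, CATS))    # second task, with cats replayed

cats_with_replay = accuracy(model, CATS)
horses_with_replay = accuracy(model, HORSES)
print("cat accuracy with replay:  ", cats_with_replay)
print("horse accuracy with replay:", horses_with_replay)
```

The price is that `CATS` has to stay in memory and be processed again, so the approach becomes more expensive as the number of earlier tasks grows.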

Siegelmann says replay is roughly analogous to what the human brain does when it dreams. Many neuroscientists think that the brain consolidates memories and learns things by replaying experiences during sleep. Similarly, replay in neural networks can reinforce weights that might otherwise be overwritten. But the brain doesn’t actually review a moment-by-moment rerun of its experiences, Siegelmann says. Rather, it reduces those experiences to a handful of characteristic features and patterns — a process known as abstraction — and replays just those parts. Her brain-inspired replay tries to do something similar; instead of reviewing mountains of stored data, it selects certain facets of what it has learnt to replay. Each layer in a neural network, Siegelmann says, moves the learning to a higher level of abstraction, from the specific input data in the bottom layer to mathematical relationships in the data at higher layers. In this way, the system sorts specific examples of objects into classes. She lets the network select the most important of the abstractions in the top couple of layers and replay those. This technique keeps the learnt weights reasonably stable — although not perfectly so — without having to store any previously used data at all.

Because such brain-inspired replay focuses on the most salient points that the network has learnt, the network can find associations between new and old data more easily. The method also helps the network to distinguish between pieces of data that it might not have separated easily before — finding the differences between a pair of identical twins, for example. If you’re down to only a handful of parameters in each set, instead of millions, it’s easier to spot the similarities. “Now, when we replay one with the other, we start looking at the differences,” Siegelmann says. “It forces you to find the separation, the contrast, the associations.”

Focusing on high-level abstractions rather than specifics is useful for continual learning because it allows the computer to make comparisons and draw analogies between different scenarios. For example, if your self-driving car has to work out how to handle driving on ice in Massachusetts, Siegelmann says, it might use data that it has about driving on ice in Michigan. Those examples won’t exactly match the new conditions, because they’re from different roads. But the car also has knowledge about driving on snow in Massachusetts, where it is familiar with the roads. So if the car can identify only the most important differences and similarities between snow and ice, Massachusetts and Michigan, instead of getting bogged down in minor details, it might come up with a solution to the specific, new situation of driving on ice in Massachusetts.

A modular approach

Looking at how the brain handles these issues can inspire ideas, even if they don’t replicate what’s going on biologically. To build a neural network that can learn new tasks without overwriting old ones, scientists take a cue from neurogenesis, the process by which neurons are formed in the brain. A machine can’t grow parts the way a body can, but computer scientists can replicate new neurons in software by generating connections in parts of the system. Although the mature neurons have learnt to react to only certain data inputs, these ‘baby neurons’ can respond to all the input. “They can react to new samples that are fed into the model,” Kudithipudi says. In other words, they can learn from new information while the already-trained neurons retain what they’ve learnt.
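One way to picture software neurogenesis is a layer that can be extended with fresh neurons while the mature ones are locked. The sketch below is an assumption-laden illustration, not the method used by Kudithipudi's group: the class name `GrowableLayer`, the freezing flag and the placeholder gradients are all hypothetical.

```python
import random

class GrowableLayer:
    """One layer of neurons; each neuron is a list of input weights."""

    def __init__(self, n_inputs, n_neurons, seed=0):
        self.n_inputs = n_inputs
        self.rng = random.Random(seed)
        self.weights = [self._new_neuron() for _ in range(n_neurons)]
        self.frozen = [False] * n_neurons

    def _new_neuron(self):
        return [self.rng.uniform(-0.1, 0.1) for _ in range(self.n_inputs)]

    def freeze_all(self):
        """Mark every existing neuron as mature, so it keeps what it learnt."""
        self.frozen = [True] * len(self.weights)

    def grow(self, n_new):
        """Add 'baby neurons' that are connected to all inputs and trainable."""
        for _ in range(n_new):
            self.weights.append(self._new_neuron())
            self.frozen.append(False)

    def forward(self, inputs):
        return [max(0.0, sum(w * x for w, x in zip(neuron, inputs)))
                for neuron in self.weights]

    def apply_gradients(self, gradients, rate=0.1):
        """Update only the neurons that are not frozen."""
        for i, grad in enumerate(gradients):
            if self.frozen[i]:
                continue
            self.weights[i] = [w - rate * g for w, g in zip(self.weights[i], grad)]

layer = GrowableLayer(n_inputs=3, n_neurons=2)
layer.freeze_all()                                  # the old task is finished
old_snapshot = [list(neuron) for neuron in layer.weights]

layer.grow(1)                                       # make room for a new task
new_snapshot = list(layer.weights[2])

placeholder_gradients = [[1.0, 1.0, 1.0]] * 3       # stand-in for real gradients
layer.apply_gradients(placeholder_gradients)

print("mature neurons unchanged:", layer.weights[:2] == old_snapshot)
print("new neuron updated:      ", layer.weights[2] != new_snapshot)
```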

Adding more neurons is just one way to enable a system to learn new things. Estrada has come up with another approach, on the basis of the fact that a neural network is only a loose approximation of a human brain. “We call the nodes in a neural network ‘neurons’. But if you see what they’re actually doing, they’re basically computing a weighted sum. It’s an incredibly simplified view of real, biological neurons, which perform all sorts of complex nonlinear signal processing.”

In an effort to mimic some of the complicated behaviours of real neurons more successfully, Estrada and his students developed what he calls deep artificial neurons (DANs)3. A DAN is a small neural network that is treated as a single neuron in a larger neural network.

DANs can be trained for one particular task — for instance, Estrada might develop one for identifying handwritten numbers. The model in the DAN is then fixed, so it can’t be changed and will always provide the same output to other neurons in the still-trainable network layers surrounding it. That larger network can go on to learn a related task, such as identifying numbers written by someone else — but the original model is not forgotten. “You end up with this general-purpose module that you can reuse for similar tasks in the future,” Estrada says. “These modules allow the system to learn to perform the new tasks in a similar way to the old tasks, so that the features are more compatible with each other over time. So that means that the features are more stable and it forgets less.”
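The contrast between an ordinary artificial neuron and a fixed module inside a trainable network can be sketched as follows. This is only an illustration of the idea as described here, not the design from Estrada's paper: the two-layer `tanh` module, its weight values and the toy targets are assumptions chosen so that the surrounding weights have something learnable to fit.

```python
import math

def plain_neuron(inputs, weights):
    """A conventional artificial neuron: just a weighted sum of its inputs."""
    return sum(w * x for w, x in zip(weights, inputs))

class DeepArtificialNeuron:
    """A tiny two-layer network standing in for one neuron; its weights are fixed."""

    def __init__(self, hidden_weights, output_weights):
        # Tuples cannot be modified in place, which keeps the module frozen.
        self.hidden_weights = tuple(tuple(row) for row in hidden_weights)
        self.output_weights = tuple(output_weights)

    def __call__(self, inputs):
        hidden = [math.tanh(plain_neuron(inputs, row)) for row in self.hidden_weights]
        return math.tanh(plain_neuron(hidden, self.output_weights))

def train_outer(module, examples, passes=500, rate=0.1):
    """Fit only the surrounding weight and bias; the module is never updated."""
    weight, bias = 0.0, 0.0
    for _ in range(passes):
        for inputs, target in examples:
            feature = module(inputs)
            error = weight * feature + bias - target
            weight -= rate * error * feature
            bias -= rate * error
    return weight, bias

dan = DeepArtificialNeuron(hidden_weights=[[0.5, -0.3], [0.2, 0.8]],
                           output_weights=[1.0, -0.5])

inputs_seen = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.5]]
# Toy targets built from the module's own output, so an exact fit exists.
examples = [(x, 2.0 * dan(x) + 1.0) for x in inputs_seen]

frozen_before = (dan.hidden_weights, dan.output_weights)
weight, bias = train_outer(dan, examples)

print("outer weight and bias:", weight, bias)
print("module unchanged:", (dan.hidden_weights, dan.output_weights) == frozen_before)
```

Because the module returns the same output for the same input before and after training, whatever it encoded for the first task survives while the surrounding layer learns the next one.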

So far, Estrada and his colleagues have shown that this technique works on fairly simple tasks, such as number recognition. But they’re trying to adapt it to more challenging problems, including learning how to play old video games such as Space Invaders. “And then, if that’s successful, we could use it for more sophisticated things,” says Estrada. It might, for instance, prove useful in autonomous drones, which are sent out with basic programming but have to adapt to new data in the environment, and will have to do any on-the-fly learning within tight power and processing constraints.

There’s a long way to go before AI can function as people do, dealing with an endless variety of ever-changing scenarios. But if computer scientists can develop the techniques to allow machines to make the continual adaptations that living creatures are capable of, it could go a long way towards making AI systems more versatile, more accurate and more recognizably intelligent.

doi: https://doi.org/10.1038/d41586-022-01962-y

This article is part of Nature Outlook: Robotics and artificial intelligence, an editorially independent supplement produced with the financial support of third parties.

References

- Patterson, D. et al. Preprint at https://arxiv.org/abs/2104.10350 (2021).

- Mandivarapu, J. K., Camp, B. & Estrada, R. Front. Artif. Intell. 3, 19 (2020).

- Camp, B., Mandivarapu, J. K. & Estrada, R. Preprint at https://arxiv.org/abs/2011.07035 (2020).

This article was authored by Marcus Woo and originally published to Nature

Fork in hand, a robot arm skewers a strawberry from above and delivers it to Tyler Schrenk’s mouth. Sitting in his wheelchair, Schrenk nudges his neck forward to take a bite. Next, the arm goes for a slice of banana, then a carrot. Each motion it performs by itself, on Schrenk’s spoken command.

For Schrenk, who became paralysed from the neck down after a diving accident in 2012, such a device would make a huge difference in his daily life if it were in his home. “Getting used to someone else feeding me was one of the strangest things I had to transition to,” he says. “It would definitely help with my well-being and my mental health.”

His home is already fitted with voice-activated power switches and door openers, enabling him to be independent for about 10 hours a day without a caregiver. “I’ve been able to figure most of this out,” he says. “But feeding on my own is not something I can do.” Which is why he wanted to test the feeding robot, dubbed ADA (short for assistive dexterous arm). Cameras located above the fork enable ADA to see what to pick up. But knowing how forcefully to stick a fork into a soft banana or a crunchy carrot, and how tightly to grip the utensil, requires a sense that humans take for granted: “Touch is key,” says Tapomayukh Bhattacharjee, a roboticist at Cornell University in Ithaca, New York, who led the design of ADA while at the University of Washington in Seattle. The robot’s two fingers are equipped with sensors that measure the sideways (or shear) force when holding the fork1. The system is just one example of a growing effort to endow robots with a sense of touch.

Part of Nature Outlook: Robotics and artificial intelligence